Upgrade from 7.7.2 to 8.0.0

1. Upgrade From 3.7.2 to 8.0.0 and 7.7.2 to 8.0.0

Kubernetes:

RDAF Infra Upgrade: 1.0.3, 1.0.3.3 (haproxy)

RDAF Platform: From 3.7.2 to 8.0.0

AIOps (OIA) Application: From 7.7.2 to 8.0.0

RDAF Deployment rdafk8s CLI: From 1.3.2 to 1.4.0

RDAF Client rdac CLI: From 3.7.2 to 8.0.0

Python: From 3.7 to 3.12

Non-Kubernetes:

RDAF Infra Upgrade: 1.0.3, 1.0.3.3 (haproxy)

RDAF Platform: From 3.7.2 to 8.0.0

OIA (AIOps) Application: From 7.7.2 to 8.0.0

RDAF Deployment rdaf CLI: From 1.3.2 to 1.4.0

RDAF Client rdac CLI: From 3.7.2 to 8.0.0

Python: From 3.7 to 3.12

1.1. Prerequisites

Before proceeding with this upgrade, verify that the following prerequisites are met.

The currently deployed CLI and RDAF services must be running the following versions.

- RDAF Deployment CLI version: 1.3.2

- Infra Services tag: 1.0.3 / 1.0.3.3 (haproxy)

- Platform Services and RDA Worker tag: 3.7.2

- OIA Application Services tag: 7.7.2

- Python Version: 3.7.4

- CloudFabrix recommends taking VMware snapshots of the VMs on which the RDA Fabric infrastructure, platform, and application services are deployed.

Important

- The Webhook URL is currently configured with port 7443 and should be updated to port 443. To update the Webhook URL:

  Log in to the UI → Administration → Organization → Configure → Alert Endpoints, select the required endpoint, and edit it to update the port.

- Any pipelines or external target sources that use port 7443 must also be updated to use port 443.
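If copies of pipeline definitions or endpoint configurations have been exported to files, a quick text search can help locate the remaining references to port 7443. The sketch below is a convenience under that assumption only: the helper name find_port_7443_refs and the export directory you pass to it are hypothetical, and the actual change is still made in the UI or in the pipeline itself.

```shell
# Hypothetical helper: list files under DIR that still reference port 7443,
# for example "https://10.0.0.5:7443/webhooks/...".
# The trailing group keeps ports such as 74430 from matching.
find_port_7443_refs() (
    dir=${1:?usage: find_port_7443_refs DIR}
    # -r: recurse, -l: print matching file names only, -E: extended regex
    grep -rlE ':7443([^0-9]|$)' "$dir"
)
```

For example, find_port_7443_refs ~/pipeline-exports (an assumed location) prints each file that still needs attention; no output and a non-zero exit status mean no reference was found.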

Note

- Check the disk space on all Platform and Service VMs using the command below. The disk usage (Use%) of the highlighted filesystems should be less than 80%.

rdauser@oia-125-216:~/collab-3.7-upgrade$ df -kh
Filesystem                         Size  Used Avail Use% Mounted on
udev                                32G     0   32G   0% /dev
tmpfs                              6.3G  357M  6.0G   6% /run
/dev/mapper/ubuntu--vg-ubuntu--lv   48G   12G   34G  26% /
tmpfs                               32G     0   32G   0% /dev/shm
tmpfs                              5.0M     0  5.0M   0% /run/lock
tmpfs                               32G     0   32G   0% /sys/fs/cgroup
/dev/loop0                          64M   64M     0 100% /snap/core20/2318
/dev/loop2                          92M   92M     0 100% /snap/lxd/24061
/dev/sda2                          1.5G  309M  1.1G  23% /boot
/dev/sdf                            50G  3.8G   47G   8% /var/mysql
/dev/loop3                          39M   39M     0 100% /snap/snapd/21759
/dev/sdg                            50G  541M   50G   2% /minio-data
/dev/loop4                          92M   92M     0 100% /snap/lxd/29619
/dev/loop5                          39M   39M     0 100% /snap/snapd/21465
/dev/sde                            15G  140M   15G   1% /zookeeper
/dev/sdd                            30G  884M   30G   3% /kafka-logs
/dev/sdc                            50G  3.3G   47G   7% /opt
/dev/sdb                            50G   29G   22G  57% /var/lib/docker
/dev/sdi                            25G  294M   25G   2% /graphdb
/dev/sdh                            50G   34G   17G  68% /opensearch
/dev/loop6                          64M   64M     0 100% /snap/core20/2379
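Checking every row by eye is error-prone on VMs with many mounts. The following sketch assumes POSIX-format df output (df -P keeps each filesystem on a single line) and prints only the mount points at or above a usage threshold. It skips the tmpfs/udev pseudo filesystems and the read-only /snap loop mounts, which always report 100%. The function name disk_usage_over is illustrative and not part of the RDAF CLI.

```shell
# Hypothetical helper: print "mount use%" for every filesystem whose
# usage is at or above LIMIT percent (default 80).
# stdin: output of `df -P` (or `df -Ph`); the header line is skipped.
disk_usage_over() (
    limit=${1:-80}
    awk -v limit="$limit" '
        NR == 1 { next }                          # header line
        $1 ~ /^(tmpfs|udev|devtmpfs)$/ { next }   # pseudo filesystems
        $6 ~ /^\/snap\// { next }                 # snap loop mounts are always 100%
        {
            use = $5
            sub(/%$/, "", use)                    # "57%" -> 57
            if (use + 0 >= limit + 0) print $6, $5
        }
    '
)
```

Run df -P | disk_usage_over 80 on each Platform and Service VM; empty output means no filesystem has reached 80%.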

- On an HA setup, verify that all MariaDB nodes are in sync using the commands below before starting the upgrade.

Tip

Run the commands below on the VM where the RDAF deployment CLI was installed and the rdafk8s setup command was run. The MariaDB configuration is read from the /opt/rdaf/rdaf.cfg file.

MARIADB_HOST=$(grep -A3 mariadb /opt/rdaf/rdaf.cfg | grep datadir | awk '{print $3}' | cut -f1 -d'/')

MARIADB_USER=$(grep -A3 mariadb /opt/rdaf/rdaf.cfg | grep user | awk '{print $3}' | base64 -d)

MARIADB_PASSWORD=$(grep -A3 mariadb /opt/rdaf/rdaf.cfg | grep password | awk '{print $3}' | base64 -d)

mysql -u"$MARIADB_USER" -p"$MARIADB_PASSWORD" -h "$MARIADB_HOST" -P3307 -e "show status like 'wsrep_local_state_comment';"

Verify that the MariaDB cluster state is Synced.

+---------------------------+--------+
| Variable_name             | Value  |
+---------------------------+--------+
| wsrep_local_state_comment | Synced |
+---------------------------+--------+

Run the command below and verify that the MariaDB cluster size is 3.

mysql -u"$MARIADB_USER" -p"$MARIADB_PASSWORD" -h "$MARIADB_HOST" -P3307 -e "SHOW GLOBAL STATUS LIKE 'wsrep_cluster_size';"

+--------------------+-------+
| Variable_name      | Value |
+--------------------+-------+
| wsrep_cluster_size | 3     |
+--------------------+-------+
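The two queries above can be combined into a single pass/fail check, which is convenient when the prerequisite check is repeated. This sketch assumes the mysql client's -N (skip column names) and -B (batch, tab-separated) options and MariaDB's SHOW GLOBAL STATUS WHERE syntax; galera_ready is a hypothetical helper name.

```shell
# Hypothetical helper: validate the two Galera status values.
# stdin: one "name<TAB>value" line per variable, as printed by
#   mysql -N -B -e "SHOW GLOBAL STATUS WHERE Variable_name IN (...)"
# Exits 0 only if the node reports Synced and the cluster size equals
# the expected node count (default 3).
galera_ready() (
    expected_size=${1:-3}
    awk -F '\t' -v want="$expected_size" '
        $1 == "wsrep_local_state_comment" { state = $2 }
        $1 == "wsrep_cluster_size"        { size  = $2 }
        END {
            printf "state=%s size=%s\n", state, size
            exit !(state == "Synced" && size + 0 == want + 0)
        }
    '
)
```

For example, reusing the variables set above:

mysql -u"$MARIADB_USER" -p"$MARIADB_PASSWORD" -h "$MARIADB_HOST" -P3307 -N -B -e "SHOW GLOBAL STATUS WHERE Variable_name IN ('wsrep_local_state_comment','wsrep_cluster_size')" | galera_ready 3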

Warning

Make sure all of the above prerequisites are met before proceeding with the upgrade process.

Warning

Kubernetes: Although Kubernetes-based RDA Fabric deployments support zero-downtime upgrades, it is recommended to schedule a maintenance window for upgrading the RDAF Platform and AIOps services to the newer version.

Important

Make sure a full backup of the RDAF platform system is completed before performing the upgrade.

Kubernetes: Run the backup command below to back up the application data.

Run the command below on the RDAF management system and make sure the Kubernetes PODs are NOT in a restarting state (applicable to Kubernetes environments only).

- Verify that the RDAF deployment rdaf CLI version is 1.3.2 on the VM where the CLI was installed for the on-premise docker registry and for managing Kubernetes or Non-Kubernetes deployments.

- On-premise docker registry service version is 1.0.3

ff6b1de8515f cfxregistry.CloudFabrix.io:443/docker-registry:1.0.3 "/entrypoint.sh /bin…" 7 days ago Up 7 days deployment-scripts-docker-registry-1

- RDAF Infrastructure services version is 1.0.3, except for the services below.

  - rda-minio: version is RELEASE.2023-09-30T07-02-29Z

  - haproxy: version is 1.0.3.3

Run the command below to get the rdafk8s Infra service details.

- RDAF Platform services version is 3.7.2.x

Run the command below to get the RDAF Platform services details.

- RDAF OIA Application services version is 7.7.2.x

Run the command below to get the RDAF App services details.

- RDAF Deployment CLI version: 1.3.2

- Infra Services tag: 1.0.3 / 1.0.3.3 (haproxy)

- Platform Services and RDA Worker tag: 3.7.2

- OIA Application Services tag: 7.7.2

- Python Version: 3.7.4

- CloudFabrix recommends taking VMware snapshots of the VMs on which the RDA Fabric infrastructure, platform, and application services are deployed.

Important

- The Webhook URL is currently configured with port 7443 and should be updated to port 443. To update the Webhook URL:

  Log in to the UI → Administration → Organization → Configure → Alert Endpoints, select the required endpoint, and edit it to update the port.

- Any pipelines or external target sources that use port 7443 must also be updated to use port 443.

Note

- Check the disk space on all Platform and Service VMs using the command below. The disk usage (Use%) of the highlighted filesystems should be less than 80%.

rdauser@oia-125-216:~/collab-3.7-upgrade$ df -kh
Filesystem                         Size  Used Avail Use% Mounted on
udev                                32G     0   32G   0% /dev
tmpfs                              6.3G  357M  6.0G   6% /run
/dev/mapper/ubuntu--vg-ubuntu--lv   48G   12G   34G  26% /
tmpfs                               32G     0   32G   0% /dev/shm
tmpfs                              5.0M     0  5.0M   0% /run/lock
tmpfs                               32G     0   32G   0% /sys/fs/cgroup
/dev/loop0                          64M   64M     0 100% /snap/core20/2318
/dev/loop2                          92M   92M     0 100% /snap/lxd/24061
/dev/sda2                          1.5G  309M  1.1G  23% /boot
/dev/sdf                            50G  3.8G   47G   8% /var/mysql
/dev/loop3                          39M   39M     0 100% /snap/snapd/21759
/dev/sdg                            50G  541M   50G   2% /minio-data
/dev/loop4                          92M   92M     0 100% /snap/lxd/29619
/dev/loop5                          39M   39M     0 100% /snap/snapd/21465
/dev/sde                            15G  140M   15G   1% /zookeeper
/dev/sdd                            30G  884M   30G   3% /kafka-logs
/dev/sdc                            50G  3.3G   47G   7% /opt
/dev/sdb                            50G   29G   22G  57% /var/lib/docker
/dev/sdi                            25G  294M   25G   2% /graphdb
/dev/sdh                            50G   34G   17G  68% /opensearch
/dev/loop6                          64M   64M     0 100% /snap/core20/2379

Warning

Make sure all of the above prerequisites are met before proceeding with the upgrade process.

Warning

Non-Kubernetes: Upgrading the RDAF Platform and AIOps application services is a disruptive operation. Schedule a maintenance window before upgrading the RDAF Platform and AIOps services to the newer version.

Important

Make sure a full backup of the RDAF platform system is completed before performing the upgrade.

Non-Kubernetes: Run the backup command below to back up the application data.

Note: Make sure this backup-dir is mounted across all Infra and CLI VMs.

- Verify that the RDAF deployment rdaf CLI version is 1.3.2 on the VM where the CLI was installed for the on-premise docker registry and for managing Kubernetes or Non-Kubernetes deployments.

- On-premise docker registry service version is 1.0.3

ff6b1de8515f cfxregistry.CloudFabrix.io:443/docker-registry:1.0.3 "/entrypoint.sh /bin…" 7 days ago Up 7 days deployment-scripts-docker-registry-1

- RDAF Infrastructure services version is 1.0.3, except for the services below.

  - rda-minio: version is RELEASE.2023-09-30T07-02-29Z

  - haproxy: version is 1.0.3.3

Run the command below to get the RDAF Infra service details.

- RDAF Platform services version is 3.7.2

Run the command below to get the RDAF Platform services details.

- RDAF OIA Application services version is 7.7.2

Run the command below to get the RDAF App services details.

Warning

Before upgrading the RDAF platform to the 8.0.0 release, complete the two mandatory steps below.

- Before upgrading the RDAF CLI from version 1.3.2 to 1.4.0: these steps are mandatory and apply only if the CFX RDAF AIOps (OIA) application services are installed.

- Upgrade the Python version from 3.7 to 3.12.

1.1.1 Upgrade Python from 3.7 to 3.12

Refer to the Python Upgrade Guide (from Python 3.7 to Python 3.12).

RDAF Deployment CLI Upgrade:

Follow the steps below.

Note

Upgrade the RDAF Deployment CLI on both the on-premise docker registry VM and the RDAF Platform's management VM, if they are provisioned separately.

Log in to the VM where the rdaf deployment CLI was installed for the on-premise docker registry and for managing Kubernetes or Non-Kubernetes deployments.

- Download the RDAF Deployment CLI's newer version 1.4.0 bundle.

wget https://macaw-amer.s3.us-east-1.amazonaws.com/releases/rdaf-platform/1.4.0/rdafcli-1.4.0.tar.gz

- Upgrade the rdafk8s CLI to version 1.4.0.

- Verify that the installed rdafk8s CLI version is upgraded to 1.4.0.

- Download the RDAF Deployment CLI's newer version 1.4.0 bundle and copy it to the RDAF CLI management VM on which the rdaf deployment CLI was installed.

- Download the RDAF Deployment CLI's newer version 1.4.0 bundle

wget https://macaw-amer.s3.us-east-1.amazonaws.com/releases/rdaf-platform/1.4.0/rdafcli-1.4.0.tar.gz

- Upgrade the rdaf CLI to version 1.4.0.

- Verify that the installed rdaf CLI version is upgraded to 1.4.0.

- Download the RDAF Deployment CLI's newer version 1.4.0 bundle and copy it to the RDAF management VM on which the rdaf & rdafk8s deployment CLI was installed.
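Several steps ask you to confirm a CLI version (1.3.2 before the upgrade, 1.4.0 after it). Plain string comparison goes wrong once a version component reaches two digits (1.10.0 would sort before 1.4.0), so the sketch below relies on GNU sort -V (version sort). It assumes you have already captured the version string reported by the CLI into a variable; version_ge is a hypothetical helper name.

```shell
# Hypothetical helper: succeed if version $1 is greater than or equal to $2.
# sort -V orders version strings numerically per component, so the smaller
# of the two versions is printed first.
version_ge() (
    [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
)
```

For example, version_ge "$installed" 1.4.0 && echo "CLI upgraded", where installed holds the version string reported by the rdaf or rdafk8s CLI.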

1.2. Upgrade Steps

1.2.1 Upgrade On-Prem Registry

Download the Python script below (rdaf_upgrade_132_140.py).

wget https://macaw-amer.s3.us-east-1.amazonaws.com/releases/rdaf-platform/1.4.0/rdaf_upgrade_132_140.py

The step below generates values.yaml.latest files for all RDAF Infrastructure, Platform and Application services in the /opt/rdaf/deployment-scripts directory.

Run the downloaded Python upgrade script rdaf_upgrade_132_140.py as shown below.

Note

The above command will show the available options for the upgrade script

usage: rdaf_upgrade_132_140.py [-h] {registry_upgrade,upgrade,migrate_dataset} ...

options:
  -h, --help            show this help message and exit

options:
  {registry_upgrade,upgrade,migrate_dataset}
                        Available options
    registry_upgrade    Upgrade on prem registry if any
    upgrade             upgrade the existing setup
    migrate_dataset     Migrate dataset using script

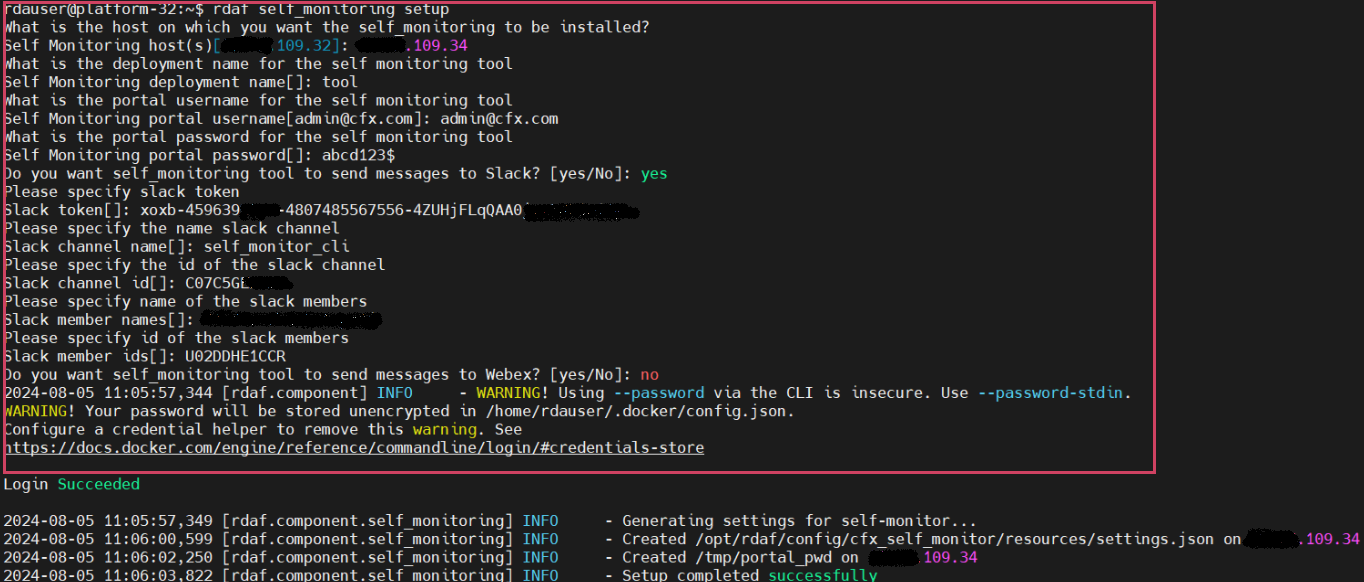

- If there is already an on-premises registry, upgrade it with the command below.

Creating backup rdaf-registry.cfg
Login Succeeded
WARNING! Using --password via the CLI is insecure. Use --password-stdin.
WARNING! Your password will be stored unencrypted in /home/rdauser/.docker/config.json.
Configure a credential helper to remove this warning. See
https://docs.docker.com/engine/reference/commandline/login/#credentials-store
Updating docker-compose binary...


1.2.2 Download the new Docker Images

Download the new docker image tags for RDAF Platform and OIA (AIOps) Application services and wait until all of the images are downloaded.

Note

If the image download fails, re-run the above command.

Run the command below to verify that the above-mentioned tags are downloaded for all of the RDAF Platform and OIA (AIOps) Application services.

Make sure the 1.0.3.1 image tag is downloaded for the telegraf service.

- rda-platform-telegraf

Make sure the 8.0.0 image tag is downloaded for the following RDAF Platform services.

- rda-client-api-server

- rda-registry

- rda-scheduler

- rda-collector

- rda-identity

- rda-fsm

- rda-asm

- rda-stack-mgr

- rda-access-manager

- rda-resource-manager

- rda-user-preferences

- onprem-portal

- onprem-portal-nginx

- rda-worker-all

- onprem-portal-dbinit

- cfxdx-nb-nginx-all

- rda-event-gateway

- rda-chat-helper

- rdac

- rdac-full

- cfxcollector

- bulk_stats

Make sure the 8.0.0 image tag is downloaded for the following RDAF OIA (AIOps) Application services.

- rda-app-controller

- rda-alert-processor

- rda-file-browser

- rda-smtp-server

- rda-ingestion-tracker

- rda-reports-registry

- rda-ml-config

- rda-event-consumer

- rda-webhook-server

- rda-irm-service

- rda-alert-ingester

- rda-collaboration

- rda-notification-service

- rda-configuration-service

- rda-alert-processor-companion
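With more than thirty images to verify, a scripted comparison is less error-prone than reading the listing by eye. The sketch below assumes the registry listing has first been reshaped into one "image tag" pair per line (the listing command's exact output format is not shown in this section); missing_tags is a hypothetical helper that prints each required image lacking the expected tag.

```shell
# Hypothetical helper: report required images that lack the expected tag.
# stdin: one "image tag" pair per line (reshape the registry listing first).
# args:  EXPECTED_TAG IMAGE...
# Prints each image without EXPECTED_TAG; exits 1 if any are missing.
missing_tags() (
    want=${1:?usage: missing_tags TAG IMAGE...}
    shift
    pairs=$(cat)    # read the listing once so it can be searched per image
    status=0
    for image in "$@"; do
        if ! printf '%s\n' "$pairs" | awk -v img="$image" -v tag="$want" '
                $1 == img && $2 == tag { found = 1 }
                END { exit (found ? 0 : 1) }'; then
            echo "$image"
            status=1
        fi
    done
    exit "$status"  # the function body is a subshell, so this only leaves the function
)
```

For example, piping the reshaped listing into missing_tags 8.0.0 rda-app-controller rda-alert-processor rda-webhook-server prints the images that still need to be downloaded, and missing_tags 1.0.3.1 rda-platform-telegraf checks the telegraf image.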

Downloaded Docker images are stored under one of the following paths.

/opt/rdaf-registry/data/docker/registry/v2/ or /opt/rdaf/data/docker/registry/v2/

Run the command below to check the filesystem disk usage on the offline registry VM where the Docker images are pulled.

If necessary, older image tags that are no longer in use can be deleted to free up disk space using the command below.

Note

Run the command below if /opt occupies more than 80% of the disk space or if the free capacity of /opt is less than 25GB.
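The cleanup criterion above (more than 80% used, or less than 25GB free on /opt) can be evaluated directly from df. This sketch assumes GNU df, whose -BG option reports sizes in GiB with a G suffix (so 25G is approximately, not exactly, 25GB); opt_needs_cleanup is a hypothetical helper name.

```shell
# Hypothetical helper: decide whether old image tags should be cleaned up.
# stdin: output of `df -P -BG /opt` (GNU df; sizes in GiB with a "G" suffix).
# Prints the values used; exits 0 when usage is above 80% or less than
# 25G is available, 1 otherwise, and 2 if no data line was read.
opt_needs_cleanup() (
    awk '
        NR == 2 {
            use = $5;   sub(/%$/, "", use)     # "84%" -> 84
            avail = $4; sub(/G$/, "", avail)   # "22G" -> 22
            need = (use + 0 > 80 || avail + 0 < 25)
            printf "use=%s%% avail=%sG cleanup=%s\n", use, avail, (need ? "yes" : "no")
            done = 1
            exit (need ? 0 : 1)
        }
        END { if (!done) exit 2 }              # no data line on stdin
    '
)
```

For example, df -P -BG /opt | opt_needs_cleanup exits 0 when removing older image tags is recommended.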

Important

Before setting up GraphDB, make sure GraphDB is not already installed. If it is already installed, skip the steps below.

If the /opt/rdaf/rdaf.cfg file contains only GraphDB configuration entries, and the GraphDB service is either not running or the GraphDB mount point is not mounted, then remove the GraphDB entries from /opt/rdaf/rdaf.cfg.
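Part of the condition above can be checked from the shell. The sketch below covers only the configuration and mount-point parts: it looks for any GraphDB entry in rdaf.cfg (a case-insensitive text match, because the file's exact section layout is an assumption here) and checks /proc/mounts for the /graphdb mount point seen in the df output earlier. Whether the GraphDB service is running still has to be checked separately. graphdb_config_stale is a hypothetical helper name.

```shell
# Hypothetical helper: flag GraphDB entries in rdaf.cfg that look stale
# because the GraphDB data directory is not mounted.
# args: [CFG] [MOUNT_POINT] [MOUNTS_TABLE]
#   defaults: /opt/rdaf/rdaf.cfg, /graphdb, /proc/mounts
# Exits 0 and prints a message when the entries look stale; exits 1 otherwise.
graphdb_config_stale() (
    cfg=${1:-/opt/rdaf/rdaf.cfg}
    mnt=${2:-/graphdb}
    mounts=${3:-/proc/mounts}
    # No GraphDB entries at all: nothing to clean up.
    grep -qi 'graphdb' "$cfg" || exit 1
    # Field 2 of each mounts-table line is the mount point.
    if awk -v m="$mnt" '$2 == m { found = 1 } END { exit (found ? 0 : 1) }' "$mounts"; then
        exit 1    # mounted, so the configuration looks live
    fi
    echo "GraphDB entries found in $cfg but $mnt is not mounted"
)
```

A zero exit status only marks the entries as candidates for removal; confirm the GraphDB service state before editing /opt/rdaf/rdaf.cfg.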

Warning

For GraphDB installation, an additional disk must be provisioned on the RDA Fabric Infrastructure VMs. Refer to the additional disk provisioning procedure to perform this action.

This is a prerequisite and must be completed before installing the GraphDB service.

- Run the Python script below to set up GraphDB.

Note

1. Take a backup of /opt/rdaf/deployment-scripts/values.yaml and /opt/rdaf/config/network_config/config.json.
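A minimal way to take the backup mentioned in the note is to copy each file next to itself with a timestamp suffix. The helper name backup_files and the .bak.<timestamp> naming are assumptions for illustration, not an RDAF convention.

```shell
# Hypothetical helper: copy each FILE to FILE.bak.<timestamp>, preserving
# mode and timestamps (cp -p). Stops at the first file that cannot be copied.
backup_files() (
    stamp=$(date +%Y%m%d-%H%M%S)
    for f in "$@"; do
        cp -p "$f" "$f.bak.$stamp" || exit 1
        echo "backed up $f -> $f.bak.$stamp"
    done
)
```

For example: backup_files /opt/rdaf/deployment-scripts/values.yaml /opt/rdaf/config/network_config/config.json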

wget https://macaw-amer.s3.amazonaws.com/releases/rdaf-platform/1.2.2/rdaf_upgrade_120_121_to_122.py

The script prompts for the IP addresses to use in the GraphDB configuration.

For a cluster setup, provide all three Infra VM IPs, separated by commas. For a standalone setup, provide the IP of the Infra VM.

After you provide the IP addresses, the script prompts for a username and password. Enter them and make a note of them for future use.

- Install the GraphDB service using the command below.

1.2.3 Upgrade RDAF Infra Services

Download the Python script below (rdaf_upgrade_132_140.py).

wget https://macaw-amer.s3.us-east-1.amazonaws.com/releases/rdaf-platform/1.4.0/rdaf_upgrade_132_140.py

Run the downloaded Python upgrade script rdaf_upgrade_132_140.py as shown below.

Updating docker compose binary..
updating docker binary on 192.168.133.61
updating docker binary on 192.168.133.65
updating docker binary on 192.168.133.64
updating docker binary on 192.168.133.63
updating docker binary on 192.168.133.62
updating docker binary on 192.168.133.66
updating docker binary on 192.168.133.60
Creating backup of existing haproxy.cfg on host 192.168.133.60
Updating haproxy configs on host 192.168.133.60..
Creating backup of existing haproxy.cfg on host 192.168.133.61
Updating haproxy configs on host 192.168.133.61..
Copied /opt/rdaf/deployment-scripts/platform.yaml to /opt/rdaf/deployment-scripts/192.168.133.63
Copied /opt/rdaf/deployment-scripts/platform.yaml to /opt/rdaf/deployment-scripts/192.168.133.64
backing up existing values.yaml..
Updating the opensearch policy user permissions...
{"status":"OK","message":"'role-fb8449cfd307447fbd02e70c34c2ccc3-dataplane-policy' updated."}

- Upgrade the haproxy service using the command below.

- Use the command below to verify that haproxy is up and in the Running state.

+--------------------+----------------+---------------+--------------+--------------------+
| Name | Host | Status | Container Id | Tag |
+--------------------+----------------+---------------+--------------+--------------------+
| haproxy | 192.168.108.13 | Up 29 hours | ed35bcfb0fa2 | 1.0.3.3 |
| haproxy | 192.168.108.14 | Up 29 hours | 578e366b280e | 1.0.3.3 |
| keepalived | 192.168.108.13 | active | N/A | N/A |
| keepalived | 192.168.108.14 | active | N/A | N/A |
| rda-nats | 192.168.108.13 | Up 1 Days ago | 0083a214d582 | 1.0.3 |
| rda-nats | 192.168.108.14 | Up 1 Days ago | 37aee17fa16e | 1.0.3 |
| rda-minio | 192.168.108.13 | Up 1 Days ago | c9732a779a20 | RELEASE.2023-09-30 |
| | | | | T07-02-29Z |
......
......
......
| rda-graphdb[agent] | 192.168.108.13 | Up 1 Days ago | 49153a8d2044 | 1.0.3 |
| rda-graphdb[coordi | 192.168.108.13 | Up 1 Days ago | 9e25838e7c35 | 1.0.3 |
| nator] | | | | |
| rda- | 192.168.108.13 | Up 1 Days ago | 646b32b07e14 | 1.0.3 |
| graphdb[server] | | | | |
| rda- | 192.168.108.14 | Up 1 Days ago | 7e64251897b3 | 1.0.3 |
| graphdb[operator] | | | | |
| rda-graphdb[agent] | 192.168.108.14 | Up 1 Days ago | babda2808d56 | 1.0.3 |
| rda-graphdb[coordi | 192.168.108.14 | Up 1 Days ago | f967c810a039 | 1.0.3 |
| nator] | | | | |
| rda- | 192.168.108.14 | Up 1 Days ago | cacfb6f9aa55 | 1.0.3 |
| graphdb[server] | | | | |
| rda- | 192.168.108.16 | Up 1 Days ago | 6dc86979daa8 | 1.0.3 |
| graphdb[operator] | | | | |
| rda-graphdb[agent] | 192.168.108.16 | Up 1 Days ago | f25a254e9c50 | 1.0.3 |
| rda-graphdb[coordi | 192.168.108.16 | Up 1 Days ago | d8c6a4b5143c | 1.0.3 |
| nator] | | | | |
| rda- | 192.168.108.16 | Up 1 Days ago | 9ab70685761d | 1.0.3 |
| graphdb[server] | | | | |
+--------------------+----------------+---------------+--------------+--------------------+

Download the Python script below (rdaf_upgrade_132_140.py).

wget https://macaw-amer.s3.us-east-1.amazonaws.com/releases/rdaf-platform/1.4.0/rdaf_upgrade_132_140.py

Run the downloaded Python upgrade script rdaf_upgrade_132_140.py as shown below.

Updating docker compose binary..
updating docker binary on 192.168.133.61
updating docker binary on 192.168.133.65
updating docker binary on 192.168.133.64
updating docker binary on 192.168.133.63
updating docker binary on 192.168.133.62
updating docker binary on 192.168.133.66
updating docker binary on 192.168.133.60
Creating backup of existing haproxy.cfg on host 192.168.133.60
Updating haproxy configs on host 192.168.133.60..
Creating backup of existing haproxy.cfg on host 192.168.133.61
Updating haproxy configs on host 192.168.133.61..
Copied /opt/rdaf/deployment-scripts/platform.yaml to /opt/rdaf/deployment-scripts/192.168.133.63
Copied /opt/rdaf/deployment-scripts/platform.yaml to /opt/rdaf/deployment-scripts/192.168.133.64
backing up existing values.yaml..
Updating the opensearch policy user permissions...
{"status":"OK","message":"'role-fb8449cfd307447fbd02e70c34c2ccc3-dataplane-policy' updated."}

- Upgrade the haproxy service using the command below. Although the tag provided is the same as the existing one,

- Use the command below to verify that haproxy is up and in the Running state.

+----------------------+-----------------+---------------+--------------+------------------------------+
| Name | Host | Status | Container Id | Tag |
+----------------------+-----------------+---------------+--------------+------------------------------+
| haproxy | 192.168.109.133 | Up 32 minutes | 29308dbe39f8 | 1.0.3.3 |
| haproxy | 192.168.109.134 | Up 32 minutes | 5f046222c558 | 1.0.3.3 |
| keepalived | 192.168.109.133 | active | N/A | N/A |
| keepalived | 192.168.109.134 | active | N/A | N/A |
| nats | 192.168.109.133 | Up 54 minutes | 472ee5e367a5 | 1.0.3 |
| nats | 192.168.109.134 | Up 54 minutes | 236bb295a6c4 | 1.0.3 |
| minio | 192.168.109.133 | Up 54 minutes | a367a1e55ad4 | RELEASE.2023-09-30T07-02-29Z |
| minio | 192.168.109.134 | Up 54 minutes | 52ee1df694bc | RELEASE.2023-09-30T07-02-29Z |
| minio | 192.168.109.135 | Up 54 minutes | efe9e178ecbf | RELEASE.2023-09-30T07-02-29Z |
| minio | 192.168.109.136 | Up 54 minutes | b5e93605edae | RELEASE.2023-09-30T07-02-29Z |
| mariadb | 192.168.109.133 | Up 54 minutes | 798215f1a1e4 | 1.0.3 |
+----------------------+-----------------+---------------+--------------+------------------------------+

Run the RDAF command below to check the infra healthcheck status.

+------------+-----------------+--------+-----------------+----------------+--------------+
| Name | Check | Status | Reason | Host | Container Id |
+------------+-----------------+--------+-----------------+----------------+--------------+
| haproxy | Port Connection | OK | N/A | 192.168.109.50 | 4e837328abf4 |
| haproxy | Service Status | OK | N/A | 192.168.109.50 | 4e837328abf4 |
| haproxy | Firewall Port | OK | N/A | 192.168.109.50 | 4e837328abf4 |
| haproxy | Port Connection | OK | N/A | 192.168.109.51 | 0a3e98914afb |
| haproxy | Service Status | OK | N/A | 192.168.109.51 | 0a3e98914afb |
| haproxy | Firewall Port | OK | N/A | 192.168.109.51 | 0a3e98914afb |
| keepalived | Service Status | OK | N/A | 192.168.109.50 | N/A |
| keepalived | Service Status | OK | N/A | 192.168.109.51 | N/A |
| nats | Port Connection | OK | N/A | 192.168.109.50 | 35e28aede79d |
| nats | Service Status | OK | N/A | 192.168.109.50 | 35e28aede79d |
| nats | Firewall Port | OK | N/A | 192.168.109.50 | 35e28aede79d |
| nats | Port Connection | OK | N/A | 192.168.109.51 | 5d7d10b891fb |
| nats | Service Status | OK | N/A | 192.168.109.51 | 5d7d10b891fb |
| nats | Firewall Port | OK | N/A | 192.168.109.51 | 5d7d10b891fb |
| minio | Port Connection | OK | N/A | 192.168.109.50 | 484e3b08ede7 |
| minio | Service Status | OK | N/A | 192.168.109.50 | 484e3b08ede7 |
| minio | Firewall Port | OK | N/A | 192.168.109.50 | 484e3b08ede7 |
| minio | Port Connection | OK | N/A | 192.168.109.51 | f9145bdaccd9 |
| minio | Service Status | OK | N/A | 192.168.109.51 | f9145bdaccd9 |
| minio | Firewall Port | OK | N/A | 192.168.109.51 | f9145bdaccd9 |
| graphdb | Port Connection | OK | N/A | 192.168.109.50 | 97273ce35c07 |
| graphdb | Service Status | OK | N/A | 192.168.109.50 | 97273ce35c07 |
| graphdb | Firewall Port | OK | N/A | 192.168.109.50 | 97273ce35c07 |
| graphdb | Port Connection | OK | N/A | 192.168.109.51 | f5d57a7f70f1 |
| graphdb | Service Status | OK | N/A | 192.168.109.51 | f5d57a7f70f1 |
| graphdb | Firewall Port | OK | N/A | 192.168.109.51 | f5d57a7f70f1 |
| graphdb | Port Connection | OK | N/A | 192.168.109.52 | 73cbfe740aae |
| graphdb | Service Status | OK | N/A | 192.168.109.52 | 73cbfe740aae |
| graphdb | Firewall Port | OK | N/A | 192.168.109.52 | 73cbfe740aae |
+------------+-----------------+--------+-----------------+----------------+--------------+
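On larger clusters the healthcheck table is long, so it can help to filter it down to the failing rows. This sketch assumes the table layout shown above (pipe-separated columns Name, Check, Status, Reason, Host, Container Id); failed_checks is a hypothetical helper name.

```shell
# Hypothetical helper: print healthcheck rows whose Status is not OK.
# stdin: the healthcheck table; columns are split on "|", so field 2 is
# Name, 3 Check, 4 Status, 5 Reason, 6 Host. Exits 1 if any check failed.
failed_checks() (
    awk -F '|' '
        function trim(s) { gsub(/^[ \t]+|[ \t]+$/, "", s); return s }
        /^[|]/ && trim($2) != "Name" {
            if (trim($4) != "OK") {
                print trim($2) " " trim($3) " " trim($6) ": " trim($4) " (" trim($5) ")"
                bad = 1
            }
        }
        END { exit (bad ? 1 : 0) }
    '
)
```

Pipe the healthcheck output into failed_checks; no output and exit status 0 mean every check reported OK.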

1.2.4 Upgrade RDAF Platform Services

Step-1: Run the command below to start upgrading the RDAF Platform services.

Because this is a non-disruptive upgrade, it puts the currently running PODs into the Terminating state and the newer-version PODs into the Pending state.

Step-2: Run the command below to check the status of the existing and newer PODs, and make sure at least one instance of each Platform service is in the Terminating state.

Step-3: Run the command below to put all Terminating RDAF platform service PODs into maintenance mode. It lists the POD IDs of the platform services that need to be put into maintenance mode, along with the rdac maintenance command to do so.

Note

If the maint_command.py script does not exist on the RDAF deployment CLI VM, download it using the command below.

Step-4: Copy and paste the rdac maintenance command, as shown below.

Step-5: Run the command below to verify the maintenance mode status of the RDAF platform services.

Step-6: Run the command below to delete the Terminating RDAF platform service PODs.

for i in $(kubectl get pods -n rda-fabric -l app_category=rdaf-platform | grep 'Terminating' | awk '{print $1}'); do kubectl delete pod "$i" -n rda-fabric --force; done

Note

Wait for 120 seconds, then repeat Step-2 through Step-6 for the rest of the RDAF Platform service PODs.
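Before running the force-delete loop in Step-6, it can be useful to review exactly which PODs it will act on. The sketch below filters kubectl get pods output on the STATUS column, so a POD whose name merely contains the word is not matched. It assumes the default kubectl get pods column layout (NAME, READY, STATUS, ...); terminating_pods is a hypothetical helper name.

```shell
# Hypothetical helper: print the names of PODs whose STATUS is "Terminating".
# stdin: output of `kubectl get pods --no-headers` (NAME READY STATUS ...).
terminating_pods() (
    awk '$3 == "Terminating" { print $1 }'
)
```

For a dry run: kubectl get pods -n rda-fabric -l app_category=rdaf-platform --no-headers | terminating_pods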

Wait until all of the new platform service PODs are in the Running state, then run the command below to verify their status and make sure all of them are running version 8.0.0.

+--------------------+----------------+-------------------+--------------+-------+
| Name | Host | Status | Container Id | Tag |
+--------------------+----------------+-------------------+--------------+-------+
| rda-api-server | 192.168.131.44 | Up 44 Minutes ago | 805af438ecd7 | 8.0.0 |
| rda-api-server | 192.168.131.45 | Up 46 Minutes ago | 6e5a3c39bff7 | 8.0.0 |
| rda-registry | 192.168.131.45 | Up 46 Minutes ago | 74495e4433b3 | 8.0.0 |
| rda-registry | 192.168.131.44 | Up 46 Minutes ago | 33fe895b8f77 | 8.0.0 |
| rda-identity | 192.168.131.44 | Up 46 Minutes ago | 0be64a850f29 | 8.0.0 |
| rda-identity | 192.168.131.47 | Up 45 Minutes ago | cc236ec05df8 | 8.0.0 |
| rda-fsm | 192.168.131.44 | Up 46 Minutes ago | 57a58b24e479 | 8.0.0 |
| rda-fsm | 192.168.131.45 | Up 46 Minutes ago | d107bbf2f1ee | 8.0.0 |
| rda-asm | 192.168.131.44 | Up 46 Minutes ago | d1a10d8d2b5f | 8.0.0 |
| rda-asm | 192.168.131.45 | Up 46 Minutes ago | 723e48495a05 | 8.0.0 |
| rda-asm | 192.168.131.47 | Up 2 Weeks ago | 130b6c27f794 | 8.0.0 |
| rda-asm | 192.168.131.46 | Up 2 Weeks ago | 7dab62106a42 | 8.0.0 |
| rda-chat-helper | 192.168.131.44 | Up 46 Minutes ago | 4967ce0a0c70 | 8.0.0 |
| rda-chat-helper | 192.168.131.45 | Up 45 Minutes ago | 5bf0c0d2ce0c | 8.0.0 |
| rda-access-manager | 192.168.131.45 | Up 46 Minutes ago | 39e325e5307b | 8.0.0 |
| rda-access-manager | 192.168.131.46 | Up 45 Minutes ago | 166498466a71 | 8.0.0 |
| rda-resource- | 192.168.131.44 | Up 45 Minutes ago | 9758013b204f | 8.0.0 |
| manager | | | | |
| rda-resource- | 192.168.131.45 | Up 45 Minutes ago | e62ee21f5ea2 | 8.0.0 |
| manager | | | | |
+--------------------+----------------+-------------------+--------------+-------+
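To confirm the version without scanning every row, the status table can be filtered for rows whose Tag differs from the expected release. The sketch assumes the table layout shown above (Name, Host, Status, Container Id, Tag) and skips the continuation rows created when a long name wraps; rows_not_at_tag is a hypothetical helper name. It is intended for the Platform and Application tables: in the Infra table, rda-minio legitimately carries a RELEASE.* tag.

```shell
# Hypothetical helper: print "name host tag" for every service row whose
# Tag is not the expected version; exits 1 if any such row exists.
# stdin: a status table with columns | Name | Host | Status | Container Id | Tag |
rows_not_at_tag() (
    want=${1:?usage: rows_not_at_tag TAG}
    awk -F '|' -v want="$want" '
        function trim(s) { gsub(/^[ \t]+|[ \t]+$/, "", s); return s }
        /^[|]/ {
            name = trim($2); host = trim($3); tag = trim($6)
            if (name == "Name" || host == "") next   # header or wrapped continuation row
            if (tag != want) { print name " " host " " tag; bad = 1 }
        }
        END { exit (bad ? 1 : 0) }
    '
)
```

Pipe the platform status table into rows_not_at_tag 8.0.0; no output and exit status 0 mean every service row reports 8.0.0.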

Run the command below to check that one rda-scheduler instance is elected as leader, as shown in the Site column.

+-------+----------------------------------------+-------------+--------------+----------+-------------+-----------------+--------+--------------+---------------+--------------+
| Cat | Pod-Type | Pod-Ready | Host | ID | Site | Age | CPUs | Memory(GB) | Active Jobs | Total Jobs |
|-------+----------------------------------------+-------------+--------------+----------+-------------+-----------------+--------+--------------+---------------+--------------|
| Infra | api-server | True | rda-api-server | 9c0484af | | 11:41:50 | 8 | 31.33 | | |
| Infra | api-server | True | rda-api-server | 196558ed | | 11:40:23 | 8 | 31.33 | | |
| Infra | asm | True | rda-asm-5b8fb9 | bcbdaae5 | | 11:42:26 | 8 | 31.33 | | |
| Infra | asm | True | rda-asm-5b8fb9 | 232a58af | | 11:42:40 | 8 | 31.33 | | |
| Infra | collector | True | rda-collector- | d06fb56c | | 11:42:03 | 8 | 31.33 | | |
| Infra | collector | True | rda-collector- | a4c79e4c | | 11:41:59 | 8 | 31.33 | | |
| Infra | registry | True | rda-registry-6 | 2fd69950 | | 11:42:03 | 8 | 31.33 | | |
| Infra | registry | True | rda-registry-6 | fac544d6 | | 11:41:59 | 8 | 31.33 | | |
| Infra | scheduler | True | rda-scheduler- | b98afe88 | *leader* | 11:42:01 | 8 | 31.33 | | |
| Infra | scheduler | True | rda-scheduler- | e25a0841 | | 11:41:56 | 8 | 31.33 | | |
| Infra | worker | True | rda-worker-5b5 | 99bd054e | rda-site-01 | 11:33:40 | 8 | 31.33 | 0 | 0 |
| Infra | worker | True | rda-worker-5b5 | 0bfdcd98 | rda-site-01 | 11:33:34 | 8 | 31.33 | 0 | 0 |
+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+
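The leader check can also be scripted. This sketch assumes the pod table layout shown above, in which Pod-Type is the second column and Site the sixth; scheduler_has_leader is a hypothetical helper name that succeeds only if a scheduler row is marked *leader*.

```shell
# Hypothetical helper: succeed if a scheduler row is marked *leader*.
# stdin: the pod table; columns are split on "|", so the leading empty
# field is $1, Pod-Type is $3 and Site is $7.
scheduler_has_leader() (
    awk -F '|' '
        function trim(s) { gsub(/^[ \t]+|[ \t]+$/, "", s); return s }
        trim($3) == "scheduler" && trim($7) == "*leader*" { found = 1 }
        END { exit (found ? 0 : 1) }
    '
)
```

Pipe the pod table into scheduler_has_leader; a non-zero exit status means no scheduler instance has been elected leader yet.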

Run the command below to check that all services have an OK status and do not report any failure messages.

Warning

For Non-Kubernetes deployments, upgrading the RDAF Platform and AIOps application services is a disruptive operation when the rolling-upgrade option is not used. Schedule a maintenance window before upgrading the RDAF Platform and AIOps services to the newer version.

Run the command below to start upgrading the RDAF Platform services with zero downtime.

Note

The timeout <10> value in the above command is specified in seconds.

Note

The rolling-upgrade option upgrades the Platform services running in high-availability mode on one VM at a time in sequence. It completes the upgrade of Platform services running on VM-1 before upgrading them on VM-2, followed by VM-3, and so on.

During this upgrade sequence, the RDAF platform continues to function without any impact on application traffic.

After the Platform services are upgraded on all VMs, the command asks for user confirmation before deleting the older-version Platform service PODs. Enter YES to delete the old Docker containers (non-Kubernetes).

192.168.133.95:5000/onprem-portal-nginx:3.7.2

2024-08-12 02:21:58,875 [rdaf.component.platform] INFO - Gathering platform container details.

2024-08-12 02:22:01,326 [rdaf.component.platform] INFO - Gathering rdac pod details.

+----------+----------------------+---------+---------+--------------+-------------+------------+
| Pod ID | Pod Type | Version | Age | Hostname | Maintenance | Pod Status |
+----------+----------------------+---------+---------+--------------+-------------+------------+
| 3a5ff878 | api-server | 3.7.2.1 | 2:34:09 | 5119921f9c1c | None | True |
| 689c2574 | registry | 3.7.2 | 3:23:10 | d21676c0465b | None | True |
| 0d03f649 | scheduler | 3.7.2.1 | 2:34:46 | dd699a1d15af | None | True |
| 0496910a | collector | 3.7.2 | 3:22:40 | 1c367e3bf00a | None | True |
| c4a88eb7 | asset-dependency | 3.7.2 | 3:22:25 | cdb3f4c76deb | None | True |
| 9562960a | authenticator | 3.7.2 | 3:22:09 | 8bda6c86a264 | None | True |
| ae8b58e5 | asm | 3.7.2 | 3:21:54 | 8f0f7f773907 | None | True |
| 1cea350e | fsm | 3.7.2 | 3:21:37 | 1ea1f5794abb | None | True |
| 32fa2f93 | chat-helper | 3.7.2 | 3:21:23 | 811cbcfba7a2 | None | True |
| 0e6f375c | cfxdimensions-app- | 3.7.2 | 3:21:07 | 307c140f99c2 | None | True |
| | access-manager | | | | | |
| 4130b2d4 | cfxdimensions-app- | 3.7.2.2 | 2:24:23 | 2d73c36426fe | None | True |
| | resource-manager | | | | | |
| 29caf947 | user-preferences | 3.7.2 | 3:20:36 | 3e2b5b7e6cb4 | None | True |
+----------+----------------------+---------+---------+--------------+-------------+------------+

Continue moving above pods to maintenance mode? [yes/no]: yes

2024-08-12 02:23:04,389 [rdaf.component.platform] INFO - Initiating Maintenance Mode...

2024-08-12 02:23:10,048 [rdaf.component.platform] INFO - Following container are in maintenance mode

+----------+----------------------+---------+---------+--------------+-------------+------------+

| Pod ID | Pod Type | Version | Age | Hostname | Maintenance | Pod Status |

+----------+----------------------+---------+---------+--------------+-------------+------------+

| 3a5ff878 | api-server | 3.7.2.1 | 2:34:49 | 5119921f9c1c | maintenance | False |

| ae8b58e5 | asm | 3.7.2 | 3:22:34 | 8f0f7f773907 | maintenance | False |

| c4a88eb7 | asset-dependency | 3.7.2 | 3:23:05 | cdb3f4c76deb | maintenance | False |

| 9562960a | authenticator | 3.7.2 | 3:22:49 | 8bda6c86a264 | maintenance | False |

| 0e6f375c | cfxdimensions-app- | 3.7.2 | 3:21:47 | 307c140f99c2 | maintenance | False |

| | access-manager | | | | | |

| 4130b2d4 | cfxdimensions-app- | 3.7.2.2 | 2:25:03 | 2d73c36426fe | maintenance | False |

| | resource-manager | | | | | |

| 32fa2f93 | chat-helper | 3.7.2 | 3:22:03 | 811cbcfba7a2 | maintenance | False |

| 0496910a | collector | 3.7.2 | 3:23:20 | 1c367e3bf00a | maintenance | False |

| 1cea350e | fsm | 3.7.2 | 3:22:17 | 1ea1f5794abb | maintenance | False |

| 689c2574 | registry | 3.7.2 | 3:23:50 | d21676c0465b | maintenance | False |

| 0d03f649 | scheduler | 3.7.2.1 | 2:35:26 | dd699a1d15af | maintenance | False |

| 29caf947 | user-preferences | 3.7.2 | 3:21:16 | 3e2b5b7e6cb4 | maintenance | False |

+----------+----------------------+---------+---------+--------------+-------------+------------+

2024-08-12 02:23:10,052 [rdaf.component.platform] INFO - Waiting for timeout of 5 seconds...

2024-08-12 02:23:15,060 [rdaf.component.platform] INFO - Upgrading service: rda_api_server on host 192.168.133.92
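Before the upgrade proceeds, every pod in the maintenance table above should show maintenance in the Maintenance column and False under Pod Status. If you capture that table as text, a small helper can flag any pod that has not moved yet. This is a minimal sketch that assumes the column layout shown above, including the CLI's habit of wrapping a long Pod Type onto a continuation row with an empty Pod ID. The function names are hypothetical and are not part of the rdaf or rdac CLIs. In the sample, one pod is marked as not yet in maintenance purely for illustration.

```python
def table_rows(text):
    """Yield the cells of each header/data row in an ASCII table, skipping borders."""
    for line in text.splitlines():
        line = line.strip()
        # Borders look like "+----+----+"; header and data rows start with "|".
        if not line.startswith("|") or set(line) <= set("+-|"):
            continue
        inner = line[1:-1] if line.endswith("|") else line[1:]
        yield [cell.strip() for cell in inner.split("|")]


def pods_not_in_maintenance(text):
    """Return (Pod ID, Pod Type) for pods that are not yet in maintenance mode."""
    rows = list(table_rows(text))
    header, merged = rows[0], []
    for cells in rows[1:]:
        if not cells[0] and merged:
            # Empty Pod ID: continuation of a wrapped cell, e.g. "access-manager".
            merged[-1] = [a + b for a, b in zip(merged[-1], cells)]
        else:
            merged.append(cells)
    col = {name: i for i, name in enumerate(header)}
    return [
        (row[col["Pod ID"]], row[col["Pod Type"]])
        for row in merged
        if row[col["Maintenance"]] != "maintenance" or row[col["Pod Status"]] != "False"
    ]


SAMPLE = """
+----------+----------------------+---------+---------+--------------+-------------+------------+
| Pod ID   | Pod Type             | Version | Age     | Hostname     | Maintenance | Pod Status |
+----------+----------------------+---------+---------+--------------+-------------+------------+
| 3a5ff878 | api-server           | 3.7.2.1 | 2:34:49 | 5119921f9c1c | maintenance | False      |
| 0e6f375c | cfxdimensions-app-   | 3.7.2   | 3:21:47 | 307c140f99c2 | None        | True       |
|          | access-manager       |         |         |              |             |            |
| 29caf947 | user-preferences     | 3.7.2   | 3:21:16 | 3e2b5b7e6cb4 | maintenance | False      |
+----------+----------------------+---------+---------+--------------+-------------+------------+
"""

print(pods_not_in_maintenance(SAMPLE))  # [('0e6f375c', 'cfxdimensions-app-access-manager')]
```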

Run the below command to upgrade the RDAF Platform services without the zero-downtime (rolling) option. This upgrade method involves downtime.

Wait until all of the new platform services are in Up state, then run the below command to verify their status and make sure all of them are running version 8.0.0.

+--------------------+----------------+-----------+--------------+-------+

| Name | Host | Status | Container Id | Tag |

+--------------------+----------------+-----------+--------------+-------+

| rda_api_server | 192.168.109.50 | Up 3 days | 27cbedd50b24 | 8.0.0 |

| rda_api_server | 192.168.109.51 | Up 3 days | abcb3c513104 | 8.0.0 |

| rda_registry | 192.168.109.50 | Up 3 days | 14202e1a8b33 | 8.0.0 |

| rda_registry | 192.168.109.51 | Up 3 days | 5cace600c998 | 8.0.0 |

| rda_scheduler | 192.168.109.50 | Up 3 days | 50818a86d5ea | 8.0.0 |

| rda_scheduler | 192.168.109.51 | Up 3 days | 4ec10e80e89a | 8.0.0 |

| rda_collector | 192.168.109.50 | Up 3 days | dbd377e500a2 | 8.0.0 |

| rda_collector | 192.168.109.51 | Up 3 days | 77e8b876c0fc | 8.0.0 |

| rda_asset_dependen | 192.168.109.50 | Up 3 days | 5978017174d3 | 8.0.0 |

| cy | | | | |

| rda_asset_dependen | 192.168.109.51 | Up 3 days | 2d135b6b477a | 8.0.0 |

| cy | | | | |

| rda_identity | 192.168.109.50 | Up 3 days | 1bf40b89e762 | 8.0.0 |

| rda_identity | 192.168.109.51 | Up 3 days | 08e6ad7e2a07 | 8.0.0 |

| rda_asm | 192.168.109.50 | Up 3 days | 2439297a8b50 | 8.0.0 |

| rda_asm | 192.168.109.51 | Up 3 days | 239b937097c0 | 8.0.0 |

| rda_fsm | 192.168.109.50 | Up 3 days | 45cd3f66cd24 | 8.0.0 |

| rda_fsm | 192.168.109.51 | Up 3 days | 2546ed50063a | 8.0.0 |

+--------------------+----------------+-----------+--------------+-------+
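Checking the Tag column by eye is error-prone, especially when the output wraps long names (rda_asset_dependen / cy above). The following is a minimal sketch that flags every container not running the expected tag. It assumes the Name / Host / Status / Container Id / Tag layout shown, and that a wrapped continuation row has an empty Host cell. The function names are hypothetical, and the rda_fsm row in the sample is changed to 3.7.2 to show a mismatch.

```python
def status_rows(text):
    """Return [name, host, tag] per container, re-joining names wrapped onto continuation rows."""
    header, entries = None, []
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("|") or set(line) <= set("+-|"):
            continue  # skip blank lines and "+----+" borders
        inner = line[1:-1] if line.endswith("|") else line[1:]
        cells = [cell.strip() for cell in inner.split("|")]
        if header is None:
            header = cells
            continue
        row = dict(zip(header, cells))
        if not row.get("Host") and entries:
            # Empty Host: the Name was wrapped, e.g. "rda_asset_dependen" + "cy".
            entries[-1][0] += row.get("Name", "")
        else:
            entries.append([row["Name"], row["Host"], row["Tag"]])
    return entries


def not_on_tag(text, expected="8.0.0"):
    """Return the containers whose Tag column differs from `expected`."""
    return [entry for entry in status_rows(text) if entry[2] != expected]


SAMPLE = """
+--------------------+----------------+-----------+--------------+-------+
| Name               | Host           | Status    | Container Id | Tag   |
+--------------------+----------------+-----------+--------------+-------+
| rda_api_server     | 192.168.109.50 | Up 3 days | 27cbedd50b24 | 8.0.0 |
| rda_asset_dependen | 192.168.109.50 | Up 3 days | 5978017174d3 | 8.0.0 |
| cy                 |                |           |              |       |
| rda_fsm            | 192.168.109.51 | Up 3 days | 2546ed50063a | 3.7.2 |
+--------------------+----------------+-----------+--------------+-------+
"""

print(not_on_tag(SAMPLE))  # [['rda_fsm', '192.168.109.51', '3.7.2']]
```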

Run the below command to check that the rda-scheduler service is elected as the leader (shown under the Site column).

Run the below command to check that all services have ok status and do not report any failure messages.

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------+

| Cat | Pod-Type | Host | ID | Site | Health Parameter | Status | Message |

|-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------|

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-status | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | minio-connectivity | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-initialization-status | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | kafka-connectivity | ok | Cluster=NTc1NWU1MTQxYmY3MTFlZg, Broker=1, Brokers=[1, 2, 3] |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-status | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | minio-connectivity | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-initialization-status | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | kafka-connectivity | ok | Cluster=NTc1NWU1MTQxYmY3MTFlZg, Broker=3, Brokers=[1, 2, 3] |

| rda_app | alert-processor | c6cc7b04ab33 | b4ebfb06 | | service-status | ok | |

| rda_app | alert-processor | c6cc7b04ab33 | b4ebfb06 | | minio-connectivity | ok | |

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------+
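A full health-check listing can run to hundreds of rows, so it helps to filter it down to the rows that are not ok. The following is a minimal sketch that assumes the output is captured as text in the Cat / Pod-Type / Host / ID / Site / Health Parameter / Status / Message layout above, and treats any Status other than ok as a failure. The function name is hypothetical. The failing row in the sample, including its "failed" status and message, is invented for illustration.

```python
def failed_checks(text):
    """Return one dict per health-check row whose Status is not 'ok'."""
    header, failures = None, []
    for line in text.splitlines():
        line = line.strip()
        # Skip blank lines, "+---+" borders and the "|---+---|" header separator.
        if not line.startswith("|") or set(line) <= set("+-|"):
            continue
        inner = line[1:-1] if line.endswith("|") else line[1:]
        cells = [cell.strip() for cell in inner.split("|")]
        if header is None:
            header = cells
            continue
        row = dict(zip(header, cells))
        if row.get("Status") != "ok":
            failures.append(row)
    return failures


SAMPLE = """
+---------+-----------------+--------------+----------+------+--------------------+--------+--------------------+
| Cat     | Pod-Type        | Host         | ID       | Site | Health Parameter   | Status | Message            |
|---------+-----------------+--------------+----------+------+--------------------+--------+--------------------|
| rda_app | alert-ingester  | 7f75047e9e44 | daa8c414 |      | service-status     | ok     |                    |
| rda_app | alert-processor | c6cc7b04ab33 | b4ebfb06 |      | minio-connectivity | failed | connection refused |
+---------+-----------------+--------------+----------+------+--------------------+--------+--------------------+
"""

for row in failed_checks(SAMPLE):
    print(row["Pod-Type"], row["ID"], row["Health Parameter"], row["Message"])
# alert-processor b4ebfb06 minio-connectivity connection refused
```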

1.2.5 Upgrade rdac CLI

1.2.6 Upgrade RDA Worker Services

Note

If the worker was deployed in an HTTP proxy environment, make sure the required HTTP proxy environment variables are added to the /opt/rdaf/deployment-scripts/values.yaml file under the rda_worker configuration section, as shown below, before upgrading the RDA Worker services.

rda_worker:
  terminationGracePeriodSeconds: 300
  replicas: 6
  sizeLimit: 1024Mi
  privileged: true
  resources:
    requests:
      memory: 100Mi
    limits:
      memory: 24Gi
  env:
    WORKER_GROUP: rda-prod-01
    CAPACITY_FILTER: cpu_load1 <= 7.0 and mem_percent < 95
    MAX_PROCESSES: '1000'
    RDA_ENABLE_TRACES: 'no'
    WORKER_PUBLIC_ACCESS: 'true'
    DISABLE_REMOTE_LOGGING_CONTROL: 'no'
    RDA_SELF_HEALTH_RESTART_AFTER_FAILURES: 3
  extraEnvs:
  - name: http_proxy
    value: http://test:<password>@<proxy-host>:3128
  - name: https_proxy
    value: http://test:<password>@<proxy-host>:3128
  - name: HTTP_PROXY
    value: http://test:<password>@<proxy-host>:3128
  - name: HTTPS_PROXY
    value: http://test:<password>@<proxy-host>:3128
  ....
  ....
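The proxy entries above set both the lowercase and the uppercase variables because tools differ in which one they read. For example, curl honors only the lowercase http_proxy for plain HTTP. If the proxy password contains a character such as @, it has to be percent-encoded in the URL, and encoding every reserved character is the safe choice. The following is a minimal sketch that builds the URL and renders the four extraEnvs entries in the layout of the rda_worker section. The helper names, proxy.example.com, and the password are hypothetical placeholders.

```python
from urllib.parse import quote

# Both cases are set because different tools read different variants.
PROXY_VARS = ("http_proxy", "https_proxy", "HTTP_PROXY", "HTTPS_PROXY")


def proxy_url(user, password, host, port=3128):
    """Build an authenticated proxy URL, percent-encoding the credentials.

    Characters such as '@' or ':' in a password could otherwise be read as URL
    delimiters, so they are encoded ('@' -> '%40', ':' -> '%3A').
    """
    return f"http://{quote(user, safe='')}:{quote(password, safe='')}@{host}:{port}"


def extra_envs_yaml(url):
    """Render an rda_worker extraEnvs block that sets every proxy variable to `url`."""
    lines = ["  extraEnvs:"]
    for name in PROXY_VARS:
        lines.append(f"  - name: {name}")
        lines.append(f'    value: "{url}"')
    return "\n".join(lines)


print(extra_envs_yaml(proxy_url("test", "p@ss:word", "proxy.example.com")))
```

Paste the printed lines under rda_worker in values.yaml, replacing any existing extraEnvs entries for these four variables.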

Step-1: Please run the below command to initiate upgrading the RDA Worker service PODs.

Step-2: Run the below command to check the status of the existing and newer PODs, and make sure at least one instance of each RDA Worker service POD is in Terminating state.

NAME READY STATUS RESTARTS AGE

rda-worker-77f459d5b9-9kdmg 1/1 Running 0 73m

rda-worker-77f459d5b9-htsmr 1/1 Running 0 74m

Step-3: Run the below command to put all Terminating RDAF worker service PODs into maintenance mode. It lists the POD IDs of the RDA Worker services along with the rdac maintenance command required to put them in maintenance mode.

Step-4: Copy & Paste the rdac maintenance command as below.

Step-5: Run the below command to verify the maintenance mode status of the RDAF worker services.

Step-6: Run the below command to delete the Terminating RDAF worker service PODs

for i in `kubectl get pods -n rda-fabric -l app_component=rda-worker | grep 'Terminating' | awk '{print $1}'`; do kubectl delete pod $i -n rda-fabric --force; done
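The grep in the loop above matches the word Terminating anywhere on a line. If you script this step, a slightly stricter filter looks only at the STATUS column of the kubectl get pods output. The following is a minimal sketch that assumes the default column layout (NAME, READY, STATUS, RESTARTS, AGE). The function name is hypothetical, and the sample marks one pod as Terminating for illustration.

```python
def terminating_pods(kubectl_output):
    """Return NAME values of pods whose STATUS column is exactly 'Terminating'."""
    lines = [line for line in kubectl_output.splitlines() if line.strip()]
    header = lines[0].split()
    status_col = header.index("STATUS")
    names = []
    for line in lines[1:]:
        fields = line.split()
        # Compare only the STATUS field, not the whole line.
        if len(fields) > status_col and fields[status_col] == "Terminating":
            names.append(fields[0])
    return names


SAMPLE = """\
NAME                          READY   STATUS        RESTARTS   AGE
rda-worker-77f459d5b9-9kdmg   1/1     Terminating   0          73m
rda-worker-77f459d5b9-htsmr   1/1     Running       0          74m
"""

print(terminating_pods(SAMPLE))  # ['rda-worker-77f459d5b9-9kdmg']
```

To use the filter against a live cluster, pass it the stdout of `subprocess.run(["kubectl", "get", "pods", "-n", "rda-fabric", "-l", "app_component=rda-worker"], capture_output=True, text=True, check=True)`. Then delete each returned name with `kubectl delete pod <name> -n rda-fabric --force`, as the loop does.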

Note

Repeat Step-2 through Step-6 for the remaining RDAF worker service PODs, waiting 120 seconds between each RDAF worker service upgrade.

Step-7: Please wait for 120 seconds to let the newer version of RDA Worker service PODs join the RDA Fabric appropriately. Run the below commands to verify the status of the newer RDA Worker service PODs.

+------------+----------------+---------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+------------+----------------+---------------+--------------+---------+

| rda-worker | 192.168.108.17 | Up 1 Hour ago | 4b36fc814a3c | 8.0.0 |

| rda-worker | 192.168.108.18 | Up 1 Hour ago | 53c5a8a4c420 | 8.0.0 |

+------------+----------------+---------------+--------------+---------+
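Step-7 can also poll instead of pausing for a fixed 120 seconds: re-check the status until the new PODs report ready or a deadline passes. The helper below is a generic sketch. `check` is any zero-argument function you supply, for example one that runs the status command and confirms that every row shows the 8.0.0 tag. The 600-second default timeout is an arbitrary assumption.

```python
import time


def wait_until(check, timeout=600.0, interval=120.0, sleep=time.sleep, clock=time.monotonic):
    """Call check() every `interval` seconds until it returns True.

    Returns True as soon as check() succeeds, or False once another wait
    would go past `timeout` seconds. `sleep` and `clock` are injectable so the
    loop can be exercised without actually waiting.
    """
    deadline = clock() + timeout
    while True:
        if check():
            return True
        if clock() + interval > deadline:
            return False
        sleep(interval)


# Demo without real waiting: the check succeeds on its third call.
fake = {"now": 0.0, "calls": 0}


def demo_check():
    fake["calls"] += 1
    return fake["calls"] >= 3


print(wait_until(demo_check,
                 sleep=lambda s: fake.__setitem__("now", fake["now"] + s),
                 clock=lambda: fake["now"]))  # True (after 240 simulated seconds)
```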

Step-8: Run the below command to check that all RDA Worker services have ok status and do not report any failure messages.

Note

If the worker was deployed in an HTTP proxy environment, make sure the required HTTP proxy environment variables are added to the /opt/rdaf/deployment-scripts/values.yaml file under the rda_worker configuration section, as shown below, before upgrading the RDA Worker services.

rda_worker:
  mem_limit: 8G
  memswap_limit: 8G
  privileged: false
  environment:
    RDA_ENABLE_TRACES: 'no'
    RDA_SELF_HEALTH_RESTART_AFTER_FAILURES: 3
    http_proxy: "http://test:<password>@<proxy-host>:3128"
    https_proxy: "http://test:<password>@<proxy-host>:3128"
    HTTP_PROXY: "http://test:<password>@<proxy-host>:3128"
    HTTPS_PROXY: "http://test:<password>@<proxy-host>:3128"

- Upgrade RDA Worker Services

Run the below command to upgrade the RDA Worker services with zero downtime (rolling upgrade).

Note

The timeout <10> in the above command is specified in seconds.

Note

The rolling-upgrade option upgrades the Worker services running in high-availability mode on one VM at a time in sequence. It completes the upgrade of Worker services running on VM-1 before upgrading them on VM-2, followed by VM-3, and so on.

After the Worker services have been upgraded on all VMs, the CLI asks for user confirmation. Enter YES to delete the older-version Worker service PODs.

2024-08-12 02:56:11,573 [rdaf.component.worker] INFO - Collecting worker details for rolling upgrade

2024-08-12 02:56:14,301 [rdaf.component.worker] INFO - Rolling upgrade worker on 192.168.133.96

+----------+----------+---------+---------+--------------+-------------+------------+

| Pod ID | Pod Type | Version | Age | Hostname | Maintenance | Pod Status |

+----------+----------+---------+---------+--------------+-------------+------------+

| c8a37db9 | worker | 3.7.2.1 | 3:32:31 | fffe44b43708 | None | True |

+----------+----------+---------+---------+--------------+-------------+------------+

Continue moving above pod to maintenance mode? [yes/no]: yes

2024-08-12 02:57:17,346 [rdaf.component.worker] INFO - Initiating maintenance mode for pod c8a37db9

2024-08-12 02:57:22,401 [rdaf.component.worker] INFO - Waiting for worker to be moved to maintenance.

2024-08-12 02:57:35,001 [rdaf.component.worker] INFO - Following worker container is in maintenance mode

+----------+----------+---------+---------+--------------+-------------+------------+

| Pod ID | Pod Type | Version | Age | Hostname | Maintenance | Pod Status |

+----------+----------+---------+---------+--------------+-------------+------------+

| c8a37db9 | worker | 3.7.2.1 | 3:33:52 | fffe44b43708 | maintenance | False |

+----------+----------+---------+---------+--------------+-------------+------------+

2024-08-12 02:57:35,002 [rdaf.component.worker] INFO - Waiting for timeout of 3 seconds.
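The rolling-upgrade behavior described in the notes above can be modeled as a small loop: one VM at a time, with maintenance mode before replacement, and deletion of old PODs only after confirmation. This is an illustrative model with hypothetical callback names, not the rdaf CLI's implementation. The callbacks stand in for whatever actually moves pods to maintenance and starts the new version.

```python
import time


def rolling_upgrade(vms, enter_maintenance, upgrade, confirm_delete, delete_old,
                    timeout=10, sleep=time.sleep):
    """Model of the rolling-upgrade order (hypothetical, not the CLI's code).

    Each VM is fully handled before the next one starts, so instances on the
    remaining VMs keep serving. Old pods are deleted only after every VM has
    been upgraded and the operator confirms.
    """
    upgraded = []
    for vm in vms:
        enter_maintenance(vm)  # put the old pod(s) on this VM into maintenance mode
        sleep(timeout)         # wait `timeout` seconds (the CLI's timeout is also in seconds)
        upgrade(vm)            # start the new-version pod(s) on this VM
        upgraded.append(vm)
    if confirm_delete():       # corresponds to answering YES at the CLI prompt
        for vm in upgraded:
            delete_old(vm)
    return upgraded


events = []
rolling_upgrade(
    ["VM-1", "VM-2", "VM-3"],
    enter_maintenance=lambda vm: events.append(("maintenance", vm)),
    upgrade=lambda vm: events.append(("upgrade", vm)),
    confirm_delete=lambda: True,
    delete_old=lambda vm: events.append(("delete", vm)),
    sleep=lambda seconds: None,
)
print(events[:4])
# [('maintenance', 'VM-1'), ('upgrade', 'VM-1'), ('maintenance', 'VM-2'), ('upgrade', 'VM-2')]
```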

Run the below command to upgrade the RDA Worker services without the zero-downtime (rolling) option. This upgrade method involves downtime.

Please wait for 120 seconds to let the newer version of RDA Worker service containers join the RDA Fabric appropriately. Run the below commands to verify the status of the newer RDA Worker service containers.

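+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+

| Cat | Pod-Type | Pod-Ready | Host | ID | Site | Age | CPUs | Memory(GB) | Active Jobs | Total Jobs |

|-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------|

| Infra | worker | True | 6eff605e72c4 | a318f394 | rda-site-01 | 13:45:13 | 4 | 31.21 | 0 | 0 |

| Infra | worker | True | ae7244d0d10a | 554c2cd8 | rda-site-01 | 13:40:40 | 4 | 31.21 | 0 | 0 |

+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+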

+------------+----------------+---------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+------------+----------------+---------------+--------------+---------+

| rda_worker | 192.168.133.92 | Up 10 hours | 778cd6641abf | 8.0.0 |

| rda_worker | 192.168.133.96 | Up 10 hours | 998ebea682fa | 8.0.0 |

+------------+----------------+---------------+--------------+---------+

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------+

| Cat | Pod-Type | Host | ID | Site | Health Parameter | Status | Message |

|-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------|

| rda_infra | api-server | 1b0542719618 | 1845ae67 | | service-status | ok | |

| rda_infra | api-server | 1b0542719618 | 1845ae67 | | minio-connectivity | ok | |

| rda_infra | api-server | d4404cffdc7a | a4cfdc6d | | service-status | ok | |

| rda_infra | api-server | d4404cffdc7a | a4cfdc6d | | minio-connectivity | ok | |

| rda_infra | asm | 8d3d52a7a475 | 418c9dc1 | | service-status | ok | |

| rda_infra | asm | 8d3d52a7a475 | 418c9dc1 | | minio-connectivity | ok | |

| rda_infra | asm | ab172a9b8229 | 2ac1d67a | | service-status | ok | |

| rda_infra | asm | ab172a9b8229 | 2ac1d67a | | minio-connectivity | ok | |

| rda_app | asset-dependency | 6ac69ca1085c | c2e9dcb9 | | service-status | ok | |

| rda_app | asset-dependency | 6ac69ca1085c | c2e9dcb9 | | minio-connectivity | ok | |

| rda_app | asset-dependency | 58a5f4f460d3 | 0b91caac | | service-status | ok | |

| rda_app | asset-dependency | 58a5f4f460d3 | 0b91caac | | minio-connectivity | ok | |

| rda_app | authenticator | 9011c2aef498 | 9f7efdc3 | | service-status | ok | |

| rda_app | authenticator | 9011c2aef498 | 9f7efdc3 | | minio-connectivity | ok | |

| rda_app | authenticator | 9011c2aef498 | 9f7efdc3 | | DB-connectivity | ok | |

| rda_app | authenticator | 148621ed8c82 | dbf16b82 | | service-status | ok | |

| rda_app | authenticator | 148621ed8c82 | dbf16b82 | | minio-connectivity | ok | |

| rda_app | authenticator | 148621ed8c82 | dbf16b82 | | DB-connectivity | ok | |

| rda_app | cfx-app-controller | 75ec0f30cfa3 | 1198fdee | | service-status | ok | |

| rda_app | cfx-app-controller | 75ec0f30cfa3 | 1198fdee | | minio-connectivity | ok | |

| rda_app | cfx-app-controller | 75ec0f30cfa3 | 1198fdee | | service-initialization-status | ok | |

| rda_app | cfx-app-controller | 75ec0f30cfa3 | 1198fdee | | DB-connectivity | ok | |

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------+

1.2.7 Upgrade OIA Application Services

Step-1: Run the below commands to initiate upgrading RDAF OIA Application services

Step-2: Run the below command to check the status of the newly upgraded PODs.

Step-3: Run the below command to put all Terminating OIA application service PODs into maintenance mode. It lists the POD IDs of the OIA application services along with the rdac maintenance command required to put them in maintenance mode.

Step-4: Copy & Paste the rdac maintenance command as below.

Step-5: Run the below command to verify the maintenance mode status of the OIA application services.

Step-6: Run the below command to delete the Terminating OIA application service PODs

for i in `kubectl get pods -n rda-fabric -l app_name=oia | grep 'Terminating' | awk '{print $1}'`; do kubectl delete pod $i -n rda-fabric --force; done

Note

Wait for 120 seconds, then repeat Step-2 through Step-6 for the remaining OIA application service PODs.

Please wait till all of the new OIA application service PODs are in Running state and run the below command to verify their status and make sure they are running with 8.0.0 version.

+--------------------+----------------+-------------------+--------------+---------+

| Name | Host | Status | Container Id | Tag |

+--------------------+----------------+-------------------+--------------+---------+

| rda-alert-ingester | 192.168.131.47 | Up 54 Minutes ago | 4c38f1f1ab76 | 8.0.0 |

| rda-alert-ingester | 192.168.131.49 | Up 49 Minutes ago | 2c55eda2dd7a | 8.0.0 |

| rda-alert- | 192.168.131.49 | Up 44 Minutes ago | 8319c5927e29 | 8.0.0 |

| processor | | | | |

| rda-alert- | 192.168.131.50 | Up 54 Minutes ago | e99d07f8bcd6 | 8.0.0 |

| processor | | | | |

| rda-alert- | 192.168.131.47 | Up 54 Minutes ago | d16d8fae566c | 8.0.0 |

| processor- | | | | |

| companion | | | | |

| rda-alert- | 192.168.131.49 | Up 48 Minutes ago | 16f12b91060d | 8.0.0 |

| processor- | | | | |

| companion | | | | |

| rda-app-controller | 192.168.131.47 | Up 54 Minutes ago | 658a64049e35 | 8.0.0 |

| rda-app-controller | 192.168.131.46 | Up 54 Minutes ago | 1c27230025a1 | 8.0.0 |

| rda-collaboration | 192.168.131.49 | Up 43 Minutes ago | 32ea58ca8e39 | 8.0.0 |

| rda-collaboration | 192.168.131.50 | Up 53 Minutes ago | 67a5e5ef8c1d | 8.0.0 |

| rda-configuration- | 192.168.131.46 | Up 54 Minutes ago | af292efd663c | 8.0.0 |

| service | | | | |

| rda-configuration- | 192.168.131.49 | Up 51 Minutes ago | 7b23b8f033a6 | 8.0.0 |

| service | | | | |

+--------------------+----------------+-------------------+--------------+---------+

Step-7: Run the below command to verify all OIA application services are up and running.

+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+

| Cat | Pod-Type | Pod-Ready | Host | ID | Site | Age | CPUs | Memory(GB) | Active Jobs | Total Jobs |

|-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------|

| App | alert-ingester | True | rda-alert-inge | 6a6e464d | | 19:19:06 | 8 | 31.33 | | |

| App | alert-ingester | True | rda-alert-inge | 7f6b42a0 | | 19:19:23 | 8 | 31.33 | | |

| App | alert-processor | True | rda-alert-proc | a880e491 | | 19:19:51 | 8 | 31.33 | | |

| App | alert-processor | True | rda-alert-proc | b684609e | | 19:19:48 | 8 | 31.33 | | |

| App | alert-processor-companion | True | rda-alert-proc | 874f3b33 | | 19:18:54 | 8 | 31.33 | | |

| App | alert-processor-companion | True | rda-alert-proc | 70cadaa7 | | 19:18:35 | 8 | 31.33 | | |

| App | asset-dependency | True | rda-asset-depe | bde06c15 | | 19:44:20 | 8 | 31.33 | | |

| App | asset-dependency | True | rda-asset-depe | 47b9eb02 | | 19:44:08 | 8 | 31.33 | | |

| App | authenticator | True | rda-identity-d | faa33e1b | | 19:44:22 | 8 | 31.33 | | |

| App | authenticator | True | rda-identity-d | 36083c36 | | 19:44:16 | 8 | 31.33 | | |

| App | cfx-app-controller | True | rda-app-contro | 5fd3c3f4 | | 19:19:39 | 8 | 31.33 | | |

| App | cfx-app-controller | True | rda-app-contro | d66e5ce8 | | 19:19:26 | 8 | 31.33 | | |

| App | cfxdimensions-app-access-manager | True | rda-access-man | ecbb535c | | 19:44:16 | 8 | 31.33 | | |

| App | cfxdimensions-app-access-manager | True | rda-access-man | 9a05db5a | | 19:44:06 | 8 | 31.33 | | |

| App | cfxdimensions-app-collaboration | True | rda-collaborat | 61b3c53b | | 19:18:48 | 8 | 31.33 | | |

| App | cfxdimensions-app-collaboration | True | rda-collaborat | 09b9474e | | 19:18:27 | 8 | 31.33 | | |

+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+
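In a high-availability deployment, two things are worth confirming in this table: every row should report Pod-Ready True, and each Pod-Type should appear as many times as you run instances. The example above shows two per service. The following is a minimal sketch that checks both. It assumes the column names shown above, and min_instances=2 is an assumption taken from this example deployment. The function name is hypothetical. The sample keeps only the first five columns and alters a few rows (one not ready, one missing companion instance) for illustration.

```python
from collections import Counter


def pod_issues(text, min_instances=2):
    """Return (not_ready, under_replicated) from a pod table like the one above.

    not_ready: (Pod-Type, ID) pairs whose Pod-Ready column is not 'True'.
    under_replicated: sorted Pod-Types with fewer than `min_instances` rows.
    """
    header, counts, not_ready = None, Counter(), []
    for line in text.splitlines():
        line = line.strip()
        if not line.startswith("|") or set(line) <= set("+-|"):
            continue  # skip blank lines, borders and the header separator
        inner = line[1:-1] if line.endswith("|") else line[1:]
        cells = [cell.strip() for cell in inner.split("|")]
        if header is None:
            header = cells
            continue
        row = dict(zip(header, cells))
        counts[row["Pod-Type"]] += 1
        if row["Pod-Ready"] != "True":
            not_ready.append((row["Pod-Type"], row["ID"]))
    under = sorted(t for t, n in counts.items() if n < min_instances)
    return not_ready, under


SAMPLE = """
+-------+---------------------------+-------------+----------------+----------+
| Cat   | Pod-Type                  | Pod-Ready   | Host           | ID       |
|-------+---------------------------+-------------+----------------+----------|
| App   | alert-ingester            | True        | rda-alert-inge | 6a6e464d |
| App   | alert-ingester            | True        | rda-alert-inge | 7f6b42a0 |
| App   | alert-processor           | False       | rda-alert-proc | a880e491 |
| App   | alert-processor           | True        | rda-alert-proc | b684609e |
| App   | alert-processor-companion | True        | rda-alert-proc | 874f3b33 |
+-------+---------------------------+-------------+----------------+----------+
"""

print(pod_issues(SAMPLE))
# ([('alert-processor', 'a880e491')], ['alert-processor-companion'])
```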

Run the below command to check that all services have ok status and do not report any failure messages.

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------+

| Cat | Pod-Type | Host | ID | Site | Health Parameter | Status | Message |

|-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------|

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | service-status | ok | |

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | minio-connectivity | ok | |

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | service-initialization-status | ok | |

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | kafka-connectivity | ok | Cluster=dKnnkaYSPELK8DBUk0rPig, Broker=0, Brokers=[0, 1, 2] |

| rda_app | alert-ingester | rda-alert-in | 6a6e464d | | kafka-consumer | ok | Health: [{'387c0cb507b84878b9d0b15222cb4226.inbound-events': 0, '387c0cb507b84878b9d0b15222cb4226.mapped-events': 0}, {}] |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | service-status | ok | |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | minio-connectivity | ok | |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | service-initialization-status | ok | |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | kafka-consumer | ok | Health: [{'387c0cb507b84878b9d0b15222cb4226.inbound-events': 0, '387c0cb507b84878b9d0b15222cb4226.mapped-events': 0}, {}] |

| rda_app | alert-ingester | rda-alert-in | 7f6b42a0 | | kafka-connectivity | ok | Cluster=dKnnkaYSPELK8DBUk0rPig, Broker=1, Brokers=[0, 1, 2] |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | service-status | ok | |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | minio-connectivity | ok | |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | service-dependency:cfx-app-controller | ok | 2 pod(s) found for cfx-app-controller |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | service-initialization-status | ok | |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | kafka-connectivity | ok | Cluster=dKnnkaYSPELK8DBUk0rPig, Broker=1, Brokers=[0, 1, 2] |

| rda_app | alert-processor | rda-alert-pr | a880e491 | | DB-connectivity | ok | |

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-----------------------------------------------------------------------------------------------------------------------------+

Run the below commands to upgrade the RDA Fabric OIA Application services with zero downtime (rolling upgrade).

Note

The timeout <10> in the above command is specified in seconds.

Note

The rolling-upgrade option upgrades the OIA application services running in high-availability mode on one VM at a time in sequence. It completes the upgrade of OIA application services running on VM-1 before upgrading them on VM-2, followed by VM-3, and so on.

After the OIA application services have been upgraded on all VMs, the CLI asks for user confirmation to delete the older-version OIA application service PODs.

2024-08-12 03:18:08,705 [rdaf.component.oia] INFO - Gathering OIA app container details.

2024-08-12 03:18:10,719 [rdaf.component.oia] INFO - Gathering rdac pod details.

+----------+----------------------+---------+---------+--------------+-------------+------------+

| Pod ID | Pod Type | Version | Age | Hostname | Maintenance | Pod Status |

+----------+----------------------+---------+---------+--------------+-------------+------------+

| 2992fe69 | cfx-app-controller | 7.7.2 | 3:44:53 | 0500f773a8ff | None | True |

| 336138c8 | reports-registry | 7.7.2 | 3:44:12 | 92a5e0daa942 | None | True |

| ccc5f3ce | cfxdimensions-app- | 7.7.2 | 3:43:34 | 99192de47ea4 | None | True |

| | notification-service | | | | | |

| 03614007 | cfxdimensions-app- | 7.7.2 | 3:42:54 | fbdf4e5c16c3 | None | True |

| | file-browser | | | | | |

| a4949804 | configuration- | 7.7.2 | 3:42:15 | 4ea08c8cbf2e | None | True |

| | service | | | | | |

| 8f37c520 | alert-ingester | 7.7.2 | 3:41:35 | e9e3a3e69cac | None | True |

| 249b7104 | webhook-server | 7.7.2.1 | 3:12:04 | 1df43cebc888 | None | True |

| 76c64336 | smtp-server | 7.7.2.1 | 3:08:57 | 03725b0cb91f | None | True |

| ad85cb4c | event-consumer | 7.7.2.1 | 3:09:58 | 8a7d349da513 | None | True |

| 1a788ef3 | alert-processor | 7.7.2.1 | 3:11:01 | a7c5294cba3d | None | True |

| 970b90b1 | cfxdimensions-app- | 7.7.2 | 3:38:14 | 01d4245bb90e | None | True |

| | irm_service | | | | | |

| 153aa6ac | ml-config | 7.7.2 | 3:37:33 | 10d5d6766354 | None | True |

| 5aa927a4 | cfxdimensions-app- | 7.7.2 | 3:36:53 | dcfda7175cb5 | None | True |

| | collaboration | | | | | |

| 6833aa86 | ingestion-tracker | 7.7.2 | 3:36:13 | ef0e78252e48 | None | True |

| afe77cb9 | alert-processor- | 7.7.2 | 3:35:33 | 6f03c7fdba51 | None | True |

| | companion | | | | | |

+----------+----------------------+---------+---------+--------------+-------------+------------+

Continue moving above pods to maintenance mode? [yes/no]: yes

2024-08-12 03:18:27,159 [rdaf.component.oia] INFO - Initiating Maintenance Mode...

2024-08-12 03:18:32,978 [rdaf.component.oia] INFO - Waiting for services to be moved to maintenance.

2024-08-12 03:18:55,771 [rdaf.component.oia] INFO - Following container are in maintenance mode

+----------+----------------------+---------+---------+--------------+-------------+------------+

Run the below command to upgrade the RDA Fabric OIA Application services without the zero-downtime (rolling) option. This upgrade method involves downtime.

Please wait till all of the new OIA application service containers are in Up state and run the below command to verify their status and make sure they are running with 8.0.0 version.

+--------------------+----------------+-------------+--------------+--------+

| Name | Host | Status | Container Id | Tag |

+--------------------+----------------+-------------+--------------+--------+

| cfx-rda-app- | 192.168.133.96 | Up 10 hours | e0a3b011092b | 8.0.0 |

| controller | | | | |

| cfx-rda-app- | 192.168.133.92 | Up 10 hours | dd729df4567f | 8.0.0 |

| controller | | | | |

| cfx-rda-reports- | 192.168.133.96 | Up 10 hours | d62ddb342bc2 | 8.0.0 |

| registry | | | | |

| cfx-rda-reports- | 192.168.133.92 | Up 10 hours | 4b30336152fe | 8.0.0 |

| registry | | | | |

| cfx-rda- | 192.168.133.96 | Up 10 hours | 6f2a8c2ff9fa | 8.0.0 |

| notification- | | | | |

| service | | | | |

| cfx-rda- | 192.168.133.92 | Up 10 hours | 4fbfe27f8006 | 8.0.0 |

| notification- | | | | |

| service | | | | |

| cfx-rda-file- | 192.168.133.96 | Up 10 hours | bd41100a456c | 8.0.0 |

| browser | | | | |

| cfx-rda-file- | 192.168.133.92 | Up 10 hours | e420ec5ee26c | 8.0.0 |

| browser | | | | |

| cfx-rda- | 192.168.133.96 | Up 10 hours | 8b4615d2c2e9 | 8.0.0 |

| configuration- | | | | |

| service | | | | |

| cfx-rda- | 192.168.133.92 | Up 10 hours | 4d2d749ec170 | 8.0.0 |

| configuration- | | | | |

| service | | | | |

| cfx-rda-alert- | 192.168.133.96 | Up 10 hours | 595524b429c3 | 8.0.0 |

| ingester | | | | |

| cfx-rda-alert- | 192.168.133.92 | Up 10 hours | 2a3a686a9355 | 8.0.0 |

| ingester | | | | |

+--------------------+----------------+-------------+--------------+--------+

Run the below command to verify all OIA application services are up and running.

+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+

| Cat | Pod-Type | Pod-Ready | Host | ID | Site | Age | CPUs | Memory(GB) | Active Jobs | Total Jobs |

|-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------|

| App | alert-ingester | True | rda-alert-inge | 6a6e464d | | 19:22:36 | 8 | 31.33 | | |

| App | alert-ingester | True | rda-alert-inge | 7f6b42a0 | | 19:22:53 | 8 | 31.33 | | |

| App | alert-processor | True | rda-alert-proc | a880e491 | | 19:23:21 | 8 | 31.33 | | |

| App | alert-processor | True | rda-alert-proc | b684609e | | 19:23:18 | 8 | 31.33 | | |

| App | alert-processor-companion | True | rda-alert-proc | 874f3b33 | | 19:22:24 | 8 | 31.33 | | |

| App | alert-processor-companion | True | rda-alert-proc | 70cadaa7 | | 19:22:05 | 8 | 31.33 | | |

| App | asset-dependency | True | rda-asset-depe | bde06c15 | | 19:47:50 | 8 | 31.33 | | |

| App | asset-dependency | True | rda-asset-depe | 47b9eb02 | | 19:47:38 | 8 | 31.33 | | |

| App | authenticator | True | rda-identity-d | faa33e1b | | 19:47:52 | 8 | 31.33 | | |

| App | authenticator | True | rda-identity-d | 36083c36 | | 19:47:46 | 8 | 31.33 | | |

| App | cfx-app-controller | True | rda-app-contro | 5fd3c3f4 | | 19:23:09 | 8 | 31.33 | | |

| App | cfx-app-controller | True | rda-app-contro | d66e5ce8 | | 19:22:56 | 8 | 31.33 | | |

| App | cfxdimensions-app-access-manager | True | rda-access-man | ecbb535c | | 19:47:46 | 8 | 31.33 | | |

| App | cfxdimensions-app-access-manager | True | rda-access-man | 9a05db5a | | 19:47:36 | 8 | 31.33 | | |

| App | cfxdimensions-app-collaboration | True | rda-collaborat | 61b3c53b | | 19:22:18 | 8 | 31.33 | | |

| App | cfxdimensions-app-collaboration | True | rda-collaborat | 09b9474e | | 19:21:57 | 8 | 31.33 | | |

| App | cfxdimensions-app-file-browser | True | rda-file-brows | 00495640 | | 19:22:45 | 8 | 31.33 | | |

| App | cfxdimensions-app-file-browser | True | rda-file-brows | 640f0653 | | 19:22:29 | 8 | 31.33 | | |

| App | cfxdimensions-app-irm_service | True | rda-irm-servic | 27e345c5 | | 19:21:43 | 8 | 31.33 | | |

| App | cfxdimensions-app-irm_service | True | rda-irm-servic | 23c7e082 | | 19:21:56 | 8 | 31.33 | | |

| App | cfxdimensions-app-notification-service | True | rda-notificati | bbb5b08b | | 19:23:20 | 8 | 31.33 | | |

| App | cfxdimensions-app-notification-service | True | rda-notificati | 9841bcb5 | | 19:23:02 | 8 | 31.33 | | |

+-------+----------------------------------------+-------------+----------------+----------+-------------+----------+--------+--------------+---------------+--------------+

Run the below command to check that all services have ok status and do not report any failure messages.

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------+

| Cat | Pod-Type | Host | ID | Site | Health Parameter | Status | Message |

|-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------|

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-status | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | minio-connectivity | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | service-initialization-status | ok | |

| rda_app | alert-ingester | 7f75047e9e44 | daa8c414 | | kafka-connectivity | ok | Cluster=NTc1NWU1MTQxYmY3MTFlZg, Broker=1, Brokers=[1, 2, 3] |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-status | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | minio-connectivity | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-dependency:configuration-service | ok | 2 pod(s) found for configuration-service |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | service-initialization-status | ok | |

| rda_app | alert-ingester | f9ec55862be0 | f9b9231c | | kafka-connectivity | ok | Cluster=NTc1NWU1MTQxYmY3MTFlZg, Broker=2, Brokers=[1, 2, 3] |

| rda_app | alert-processor | c6cc7b04ab33 | b4ebfb06 | | service-status | ok | |

| rda_app | alert-processor | c6cc7b04ab33 | b4ebfb06 | | minio-connectivity | ok | |

+-----------+----------------------------------------+--------------+----------+-------------+-----------------------------------------------------+----------+-------------------------------------------------------------+
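The health-check output can run to hundreds of rows, so spotting a single non-ok entry by eye is error-prone. The Python sketch below automates that check. It assumes the output has been saved as plain text in the pipe-delimited layout shown above; the file name healthcheck.txt in the usage note is hypothetical. The sketch skips border and blank lines, treats the first `|` row as the header, and returns every row whose Status column is not ok.

```python
from typing import Dict, List


def parse_pipe_table(text: str) -> List[Dict[str, str]]:
    """Parse a '| a | b |' style table into dicts keyed by the header row."""
    header: List[str] = []
    rows: List[Dict[str, str]] = []
    for raw in text.splitlines():
        line = raw.strip()
        # Skip blank lines, '+---+' borders and '|---+---|' separators.
        if not line.startswith("|") or set(line) <= set("|+-"):
            continue
        inner = line[1:-1] if line.endswith("|") else line[1:]
        cells = [cell.strip() for cell in inner.split("|")]
        if not header:
            header = cells  # the first '|' row is the header
            continue
        rows.append(dict(zip(header, cells)))
    return rows


def failing_checks(text: str) -> List[Dict[str, str]]:
    """Return every row whose Status column is anything other than 'ok'."""
    return [row for row in parse_pipe_table(text) if row.get("Status") != "ok"]
```

Usage: `for row in failing_checks(open("healthcheck.txt").read()): print(row["Pod-Type"], row["Health Parameter"], row["Message"])`. An empty result means every health parameter reported ok.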

1.2.7.1 Upgrade Migrate Datasets

Dataset handling has been updated to support special characters in dataset names by using a hash prefix for dataset objects. To apply the hash prefix to existing (older) datasets, an upgrade script is available that fetches the current dataset objects from MinIO and updates them accordingly.

Run the Python upgrade script below to migrate the datasets.

Note

When it completes, the script displays the number of successfully migrated and failed dataset objects, as shown below. If any dataset objects fail, re-run the script until all dataset objects are migrated.

rdauser@kubofflinereg10812:~$ python rdaf_upgrade_132_140.py migrate_dataset

2025-02-13 14:49:51,668 [PID=829:TID=MainThread:cfx.__main__:add_prefix_to_unhashed_objects:197] INFO - Found 7 unhashed dataset objects to update.

2025-02-13 14:49:51,748 [PID=829:TID=ThreadPoolExecutor-0_0:cfx.__main__:_process_single_dataset:280] INFO - Successfully updated dataset: cfxdm-saved-data/vcenter_159_151-esxi-host-inventory-meta.yml

2025-02-13 14:49:51,800 [PID=829:TID=ThreadPoolExecutor-0_0:cfx.__main__:_process_single_dataset:280] INFO - Successfully updated dataset: cfxdm-saved-data/vcenter_159_151-host-network-inventory-meta.yml

2025-02-13 14:49:51,867 [PID=829:TID=ThreadPoolExecutor-0_0:cfx.__main__:_process_single_dataset:280] INFO - Successfully updated dataset: cfxdm-saved-data/vcenter_159_151-storage-inventory-meta.yml

2025-02-13 14:49:51,927 [PID=829:TID=ThreadPoolExecutor-0_0:cfx.__main__:_process_single_dataset:280] INFO - Successfully updated dataset: cfxdm-saved-data/vcenter_159_151-vcenter-datastore-inventory-meta.yml

2025-02-13 14:49:51,979 [PID=829:TID=ThreadPoolExecutor-0_0:cfx.__main__:_process_single_dataset:280] INFO - Successfully updated dataset: cfxdm-saved-data/vcenter_159_151-vcenter-summary-inventory-meta.yml

2025-02-13 14:49:52,246 [PID=829:TID=ThreadPoolExecutor-0_0:cfx.__main__:_process_single_dataset:280] INFO - Successfully updated dataset: cfxdm-saved-data/vcenter_159_151-vm-inventory-meta.yml

2025-02-13 14:49:52,304 [PID=829:TID=ThreadPoolExecutor-0_0:cfx.__main__:_process_single_dataset:280] INFO - Successfully updated dataset: cfxdm-saved-data/vcenter_159_151-vswitch-inventory-meta.yml

2025-02-13 14:49:52,305 [PID=829:TID=MainThread:cfx.__main__:add_prefix_to_unhashed_objects:222] INFO - Total dataset objects processed: 1501, Success: 1501, Failed: 0

2025-02-13 14:49:52,305 [PID=829:TID=MainThread:cfx.__main__:add_prefix_to_unhashed_objects:226] INFO - Total time taken: 507.64 seconds

Dataset migration script execution completed.
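Because the Note above asks you to re-run the script whenever any dataset objects fail, the summary line can be checked programmatically. The Python sketch below assumes the script's output was captured to a file (for example with `python rdaf_upgrade_132_140.py migrate_dataset 2>&1 | tee migrate_dataset.log`, where the log file name is hypothetical) and that the summary line keeps the format shown above. `needs_rerun` returns True when the summary line is missing or reports a non-zero Failed count.

```python
import re
from typing import Optional, Tuple

# Matches the summary line, e.g.
# "Total dataset objects processed: 1501, Success: 1501, Failed: 0"
SUMMARY_RE = re.compile(
    r"Total dataset objects processed:\s*(\d+),\s*Success:\s*(\d+),\s*Failed:\s*(\d+)"
)


def parse_summary(log_text: str) -> Optional[Tuple[int, int, int]]:
    """Return (processed, success, failed) from the last summary line, or None."""
    matches = SUMMARY_RE.findall(log_text)
    if not matches:
        return None
    processed, success, failed = (int(value) for value in matches[-1])
    return processed, success, failed


def needs_rerun(log_text: str) -> bool:
    """True if no summary was printed or any dataset objects failed."""
    summary = parse_summary(log_text)
    return summary is None or summary[2] > 0
```

Treating a missing summary as a failure is deliberate: if the script stopped before printing its totals, the migration cannot be assumed complete.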

1.2.8 Upgrade Event Gateway Services

Important

This upgrade applies to Non-K8s deployments only.

Step 1. Prerequisites

- The Event Gateway with the 3.7.2 tag must already be installed.

Note

If you deployed the Event Gateway using the RDAF CLI, follow Step 2 and skip Step 3. If you did not deploy the Event Gateway using the RDAF CLI, go directly to Step 3.

Step 2. Upgrade Event Gateway Using RDAF CLI

-

To upgrade the Event Gateway, log in to the RDAF CLI VM and run the following command.

Step 3. Upgrade Event Gateway Using Docker Compose File

-

Log in to the VM where the Event Gateway is installed.

-

Navigate to the directory where the Event Gateway was previously installed, using the following command.

-

Edit the Event Gateway docker-compose file using a local editor (e.g. vi), update the image tag to 8.0.0, and save the file.

version: '3.1'
services:
  rda_event_gateway:
    image: docker1.cloudfabrix.io:443/external/ubuntu-rda-event-gateway:8.0.0
    restart: always
    network_mode: host
    mem_limit: 6G
    memswap_limit: 6G
    volumes:
      - /opt/rdaf/network_config:/network_config
      - /opt/rdaf/event_gateway/config:/event_gw_config
      - /opt/rdaf/event_gateway/certs:/certs
      - /opt/rdaf/event_gateway/logs:/logs
      - /opt/rdaf/event_gateway/log_archive:/tmp/log_archive
    logging:
      driver: "json-file"
      options:
        max-size: "25m"
        max-file: "5"
    environment:
      RDA_NETWORK_CONFIG: /network_config/rda_network_config.json
      EVENT_GW_MAIN_CONFIG: /event_gw_config/main/main.yml
      EVENT_GW_SNMP_TRAP_CONFIG: /event_gw_config/snmptrap/trap_template.json
      EVENT_GW_SNMP_TRAP_ALERT_CONFIG: /event_gw_config/snmptrap/trap_to_alert_go.yaml
      AGENT_GROUP: event_gateway_site01
      EVENT_GATEWAY_CONFIG_DIR: /event_gw_config
      LOGGER_CONFIG_FILE: /event_gw_config/main/logging.yml

-

Please run the following commands

-

Run the command below to verify that the RDA Docker containers are up and running.

-

Run the command below to check the Docker logs for any errors.
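If you prefer to script the tag change in Step 3 rather than edit the file in vi, the Python sketch below rewrites the Event Gateway image tag and keeps a .bak copy of the original. It assumes the compose file contains an `image:` line ending in `ubuntu-rda-event-gateway:<tag>`, as in the example above. The compose file path in the usage note is hypothetical; substitute the file in your Event Gateway install directory. A regular expression is used instead of a YAML parser so that comments and formatting elsewhere in the file are left untouched.

```python
import re
from pathlib import Path

# Group 1: "image: <registry path>/ubuntu-rda-event-gateway:"  Group 2: the current tag.
IMAGE_RE = re.compile(r"""(image:\s*["']?[^\s"']*/ubuntu-rda-event-gateway:)([^\s"']+)""")


def set_event_gateway_tag(compose_text: str, new_tag: str) -> str:
    """Return compose_text with the Event Gateway image tag replaced by new_tag."""
    # A function replacement avoids backreference surprises such as "\18.0.0".
    updated, count = IMAGE_RE.subn(lambda m: m.group(1) + new_tag, compose_text)
    if count == 0:
        raise ValueError("Event Gateway image line not found")
    return updated


def update_compose_file(path: str, new_tag: str = "8.0.0") -> None:
    """Rewrite the compose file in place, saving the original as <name>.bak."""
    compose = Path(path)
    original = compose.read_text()
    compose.with_name(compose.name + ".bak").write_text(original)
    compose.write_text(set_event_gateway_tag(original, new_tag))
```

Usage: `update_compose_file("/path/to/event_gateway/docker-compose.yml")` (hypothetical path), then bring the container up again with the commands in this step.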

Tip

Starting with version 3.6.1, the RDA Event Gateway agent provides enhanced Syslog TCP/UDP endpoints, written in Go, that significantly increase event processing rates and optimize system resource utilization.

-

New Syslog TCP Endpoint Type: syslog_tcp_go

-

New Syslog UDP Endpoint Type: syslog_udp_go
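After the upgrade, one way to confirm that a syslog_udp_go or syslog_tcp_go endpoint accepts traffic is to send it a test message and look for the resulting event downstream. The Python sketch below builds a BSD-style (RFC 3164) syslog line and sends it over UDP, or over TCP with newline framing. Whether the Go endpoints expect this exact syslog format is an assumption, and the host, port, hostname, and app name are placeholders; use the values configured for the endpoint in your Event Gateway.

```python
import socket
from datetime import datetime


def build_syslog_message(msg: str, hostname: str = "test-host",
                         app: str = "rdaf-test", facility: int = 1,
                         severity: int = 6) -> bytes:
    """Build an RFC 3164 style syslog line.

    PRI = facility * 8 + severity; the defaults (facility 1 = user,
    severity 6 = informational) produce <14>.
    """
    pri = facility * 8 + severity
    now = datetime.now()
    # RFC 3164 timestamps space-pad the day of month, e.g. "Feb  3 14:49:51".
    timestamp = f"{now:%b} {now.day:2d} {now:%H:%M:%S}"
    return f"<{pri}>{timestamp} {hostname} {app}: {msg}".encode("utf-8")


def send_udp(host: str, port: int, payload: bytes) -> None:
    """Send one syslog message as a single UDP datagram."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(payload, (host, port))


def send_tcp(host: str, port: int, payload: bytes, timeout: float = 5.0) -> None:
    """Send one syslog message over TCP, terminated by a newline (RFC 6587 framing)."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        sock.sendall(payload + b"\n")
```

For example, `send_udp("192.0.2.10", 5140, build_syslog_message("upgrade test"))`, where the address (a documentation IP) and port are placeholders.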

1.2.9 Install RDAF Bulkstats Services

Note

The RDAF Bulkstats service is optional and only necessary if the Bulkstats data ingestion feature is required. Otherwise, you may ignore the steps below and go to the next section.

Run the below command to install the bulk_stats services.

For HA setups, specify two hosts separated by a comma.
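A quick local check of the host list before running the install command can catch typos in HA setups. The Python sketch below splits a comma-separated value and validates each entry. It assumes every entry is an IP address (as in the status tables in this section) and that at most two hosts are expected, raising ValueError otherwise. It is a standalone pre-check and does not call the RDAF CLI.

```python
import ipaddress
from typing import List


def parse_hosts(value: str, max_hosts: int = 2) -> List[str]:
    """Split a comma-separated host list and validate each entry as an IP address."""
    hosts = [h.strip() for h in value.split(",") if h.strip()]
    if not 1 <= len(hosts) <= max_hosts:
        raise ValueError(f"expected 1 to {max_hosts} hosts, got {len(hosts)}")
    for host in hosts:
        ipaddress.ip_address(host)  # raises ValueError if not a valid IP address
    if len(set(hosts)) != len(hosts):
        raise ValueError("duplicate host in list")
    return hosts
```

For example, `parse_hosts("192.168.108.13,192.168.108.14")` returns both addresses, while a repeated or malformed entry is rejected before anything is deployed.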

Note

When deploying Bulkstats on a new VM, make sure its username and password match those of the existing VMs.

Run the below command to get the bulk_stats status

+----------------+----------------+---------------+--------------+-------+

| Name | Host | Status | Container Id | Tag |

+----------------+----------------+---------------+--------------+-------+

| rda-bulk-stats | 192.168.108.13 | Up 1 Days ago | ac3379bfcc9d | 8.0.0 |

| rda-bulk-stats | 192.168.108.14 | Up 1 Days ago | c78283c06d88 | 8.0.0 |

+----------------+----------------+---------------+--------------+-------+
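To confirm the result from the status output, the Python sketch below checks each row of a bulk_stats status table: the Status column should start with Up and the Tag column should match the expected version (8.0.0 for this upgrade). It assumes the table is captured as text with the Name | Host | Status | Container Id | Tag header shown above; the function name is hypothetical.

```python
from typing import Dict, List, Optional


def bulk_stats_problems(table_text: str, expected_tag: str = "8.0.0") -> List[str]:
    """Describe every row that is not 'Up' or not running expected_tag."""
    problems: List[str] = []
    header: Optional[List[str]] = None
    for raw in table_text.splitlines():
        line = raw.strip()
        if not line.startswith("|"):
            continue  # skip blank lines and '+----+' borders
        inner = line[1:-1] if line.endswith("|") else line[1:]
        cells = [cell.strip() for cell in inner.split("|")]
        if header is None:
            header = cells  # first '|' row holds the column names
            continue
        row: Dict[str, str] = dict(zip(header, cells))
        if not row.get("Status", "").startswith("Up"):
            problems.append(f"{row.get('Host')}: status {row.get('Status')!r}")
        if row.get("Tag") != expected_tag:
            problems.append(f"{row.get('Host')}: tag {row.get('Tag')!r}")
    return problems
```

An empty list means every listed bulk_stats instance is up and on the expected tag.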

Note

The RDAF Bulkstats service is optional and only necessary if the Bulkstats data ingestion feature is required. Otherwise, you may ignore the steps below and go to the next section.

Run the below command to install the bulk_stats services.

For HA setups, specify two hosts separated by a comma.

Note

When deploying Bulkstats on a new VM, make sure its username and password match those of the existing VMs.

Run the below command to get the bulk_stats status

+----------------+----------------+-------------+--------------+-------+

| Name | Host | Status | Container Id | Tag |

+----------------+----------------+-------------+--------------+-------+

| rda_bulk_stats | 192.168.133.96 | Up 4 days | 67da2301d30c | 8.0.0 |

| rda_bulk_stats | 192.168.133.92 | Up 46 hours | 32179032bb97 | 8.0.0 |

+----------------+----------------+-------------+--------------+-------+

1.2.9.1 Install RDAF File Object Services

Note

This service is applicable to Non-K8s deployments only. The RDAF File Object service is optional and only necessary if the Bulkstats data ingestion feature is required. Otherwise, you may ignore the steps below and go to the next section.

Stop the File Object service using its docker-compose file.

If Bulkstats was already deployed with an older version, remove the rda_file_object entries from the rdaf.cfg file.
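The layout of rdaf.cfg is not shown in this guide. If your rdaf.cfg is an INI-style file and the file object entries live in their own section named rda_file_object (both are assumptions; open the file and confirm before running anything), the Python sketch below removes that section and keeps a .bak copy of the original. Note that configparser does not preserve comments when it rewrites a file, so editing the file by hand may be preferable if it contains comments you want to keep.

```python
import configparser
import shutil


def remove_file_object_section(cfg_path: str, section: str = "rda_file_object") -> bool:
    """Drop `section` from an INI-style file, saving a .bak copy first.

    Returns True if the section was found and removed, False otherwise.
    ASSUMPTION: rdaf.cfg is INI-formatted with a [rda_file_object] section.
    """
    parser = configparser.ConfigParser(interpolation=None)  # keep '%' literal
    parser.optionxform = str  # preserve the original case of keys
    with open(cfg_path) as f:
        parser.read_file(f)
    if not parser.has_section(section):
        return False
    shutil.copy2(cfg_path, cfg_path + ".bak")
    parser.remove_section(section)
    with open(cfg_path, "w") as f:
        parser.write(f)
    return True
```

Returning False when the section is absent makes the sketch safe to run twice; the second run leaves the file and its backup untouched.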